Daily Digest 009 - Musk Open Sources Grok Chatbot Code and Devin Puts Another Nail in the Software Engineering Profession

News, research, hacks, repos and apps from the world of Generative AI.

Hi folks, welcome to edition 009 of the new BotZilla “Daily” Digest format, where I select the most interesting news stories, research papers, GitHub repos, GPTs, tools and apps to help you in your quest to integrate GenAI into your business.

Disclaimer: There are a ton of links in this digest, and while I make every effort to ensure each is harmless, please exercise your cyber due diligence before installing or using any software linked from this post.

Quotable

“AI will probably be smarter than any single human next year [2025]. By 2029, AI is probably smarter than all humans combined.”

Elon Musk

CEO Tesla, SpaceX (++)

Latest AI News

Elon Musk Open Sources Grok, His “truth-seeking” Chatbot

In last week’s post, I discussed how Elon Musk had filed a lawsuit against “Open”AI for being too closed and for potentially harbouring a new algorithm, known as Q-Star, which allegedly performs reasoning tasks close to human-level AGI (Artificial General Intelligence).

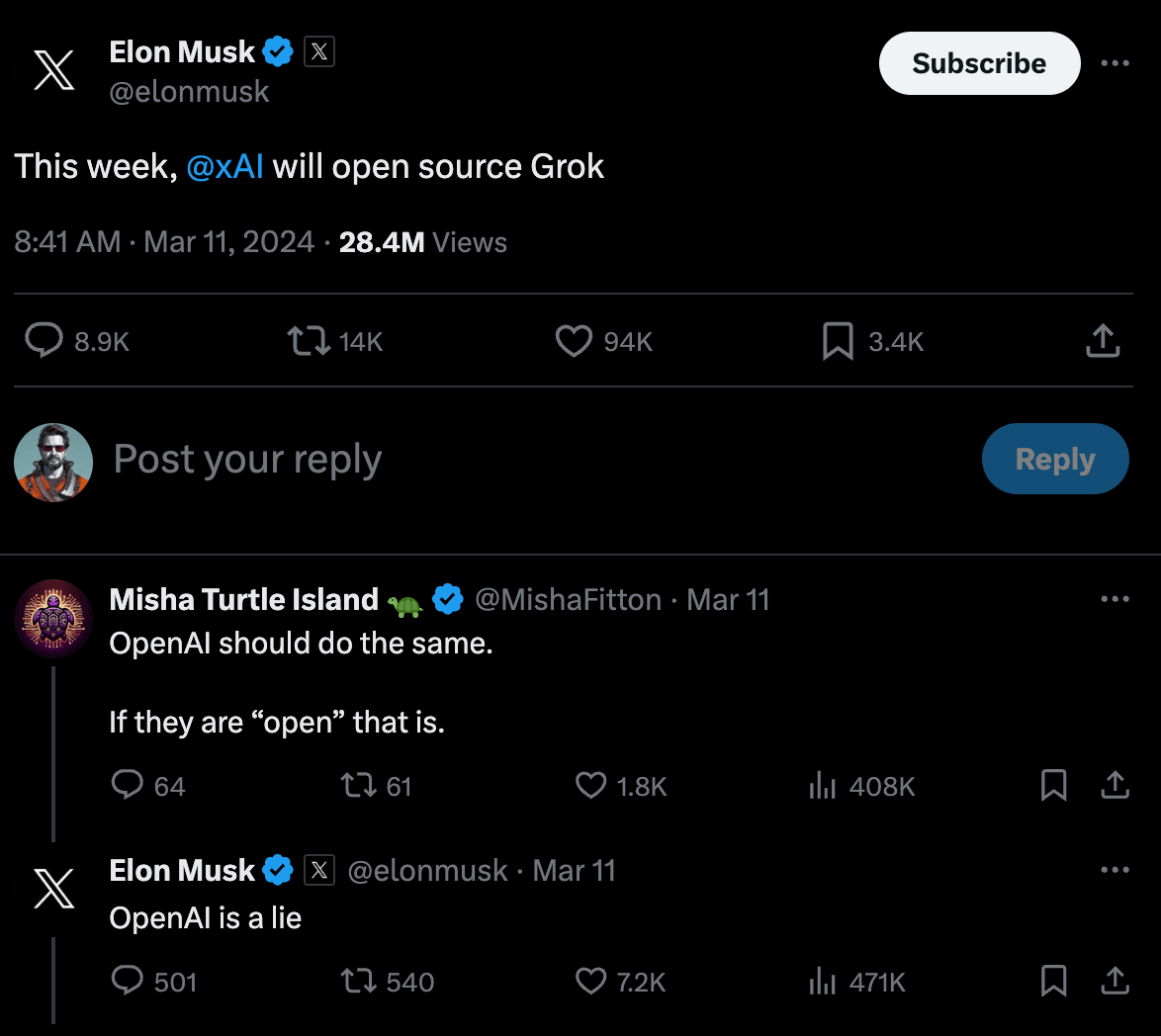

This week, Musk announced he would open source the code for his own chatbot, known as Grok, to anyone for free. In a way, he had no choice but to do so after calling out Sam Altman and OpenAI in the lawsuit for not doing the same.

In case you missed what Grok is about, it’s an LLM-powered chatbot and API with real-time access to knowledge via the X/Twitter platform.

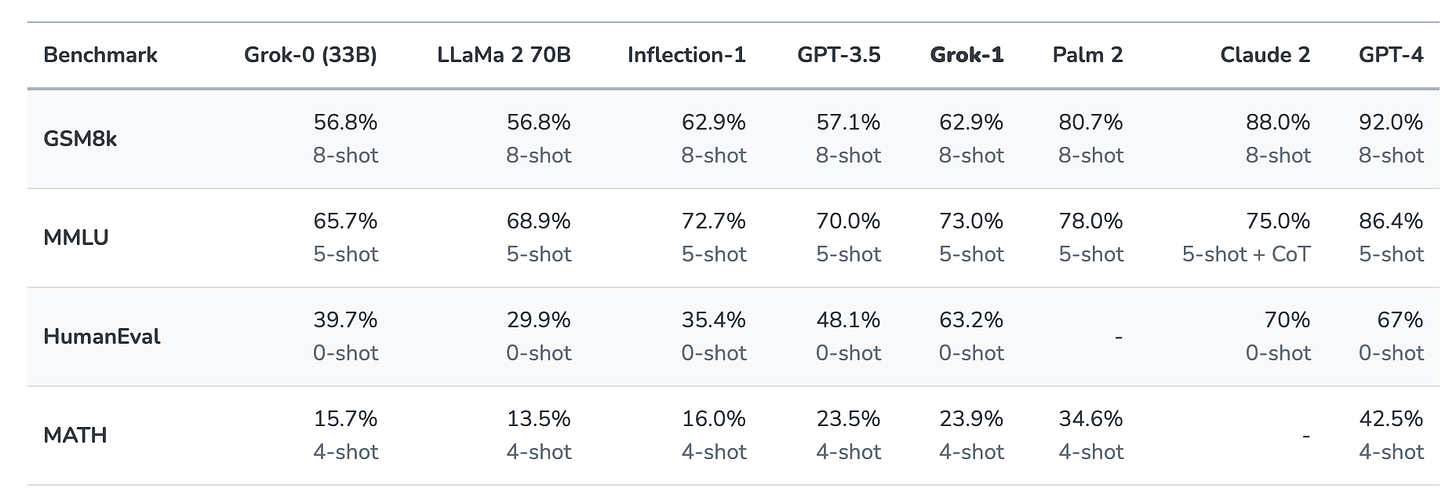

Grok-1's performance is roughly on par with GPT-3.5 and Llama 2 70B, which is nothing to write home about at this point, particularly compared with recent models from Google and Anthropic, although it is fast.

Musk's move raises the bigger question that AI researchers are grappling with: is it ultimately more beneficial for humanity to keep AI technology open-source or proprietary (closed-source)?

Meta, for example, is the largest corporate proponent of open-source AI models, and freely distributes much of its AI research, including code and weights, as demonstrated by its popular Llama series of LLMs.

Using the word “open”, however, is nuanced, as there are many different “open” licensing models with varying restrictions applied.

Typically, advocates for open-source models agree that making the code and weights accessible for review and further research ensures that the broader community benefits from the advancements made by major AI lab investments.

Advocates also argue that this openness helps uncover security vulnerabilities and other flaws sooner, by exposing the architecture to scrutiny from the global community.

However, open-sourcing powerful AI tech also has its detractors.

As models grow in sophistication and the once-distant mirage of AGI starts to crystallise into tangible reality, critics argue that giving anyone (including enemies and adversaries from countries like Russia, China, Iran and North Korea) access to the code and weights is tantamount to giving them access to our top-secret nuclear or biological weapons.

I believe that today’s publicly accessible but closed-source frontier models, at the level of GPT-4, are powerful but would not represent an existential threat if their code ended up in the wrong hands.

Nevertheless, this situation could swiftly evolve as more advanced models are launched in the coming years (and perhaps even months—hello GPT-5 in 2024?).

I believe that at some point, provided AI continues its current exponential improvement trajectory, governments will have no choice but to regulate who has access to the technology (that is, the code, weights and other model components), and potentially even what end users can use it for, should AI gain superhuman reasoning capabilities.

As a result, I don’t believe that ASI (Artificial Super Intelligence) models will be open to the general public the way ChatGPT currently is for a monthly subscription, let alone have their code and weights published on platforms like GitHub and Hugging Face.


Part of the challenge is ASI economics.

For an ASI to reason through vast amounts of data and logic, it will likely need a similarly vast amount of computing resources, which are limited and expensive.

I imagine that, initially at least, access to “the” ASI (and there will likely eventually be many) will be regulated and usage allocated just as time on the world’s great astronomical telescopes, like Hubble and James Webb, is today: on a rota based on the merits of each proponent’s use case.

The utopian idea that an ASI will be open to anyone, who can then set it to work on a problem of their choosing, is just that: utopian. There are simply too many crazy, power-hungry people for that to happen, not to mention governments and institutions around the world that could use an ASI for nefarious ends.

So, if ASI ever comes along, my view is that it will likely be restricted to use in government, science, military, and large corporations rather than for individuals and smaller companies.

How that pans out across society and the economy is anyone’s guess.

It’s not hard to imagine that the people and organisations in charge of an ASI, even those with good intentions, could be corrupted by the responsibility of wielding its power.

Then there’s the whole other question of whether an ASI will conform to the wishes of its intellectually inferior creators. ASI is potentially Frankenstein’s monster from Mary Shelley’s novel and the Ring of Power from J.R.R. Tolkien’s The Lord of the Rings, all wrapped into one! 😱

We have digressed somewhat from the present debate over open vs closed models, but in summary, it feels to me like the ultimate future of AI is closed rather than open, depending largely on how powerful AI becomes. That may well be some time away, but also … maybe not.

📰 In other news

Cognition Releases “Devin” the World’s First AI Agent Software Engineer

In another blow to the software engineering profession, software engineers (ahem) at Cognition Labs, funded by a paltry (by recent AI startup standards) $21 million Series A led by Founders Fund, just released an AI agent software engineer.

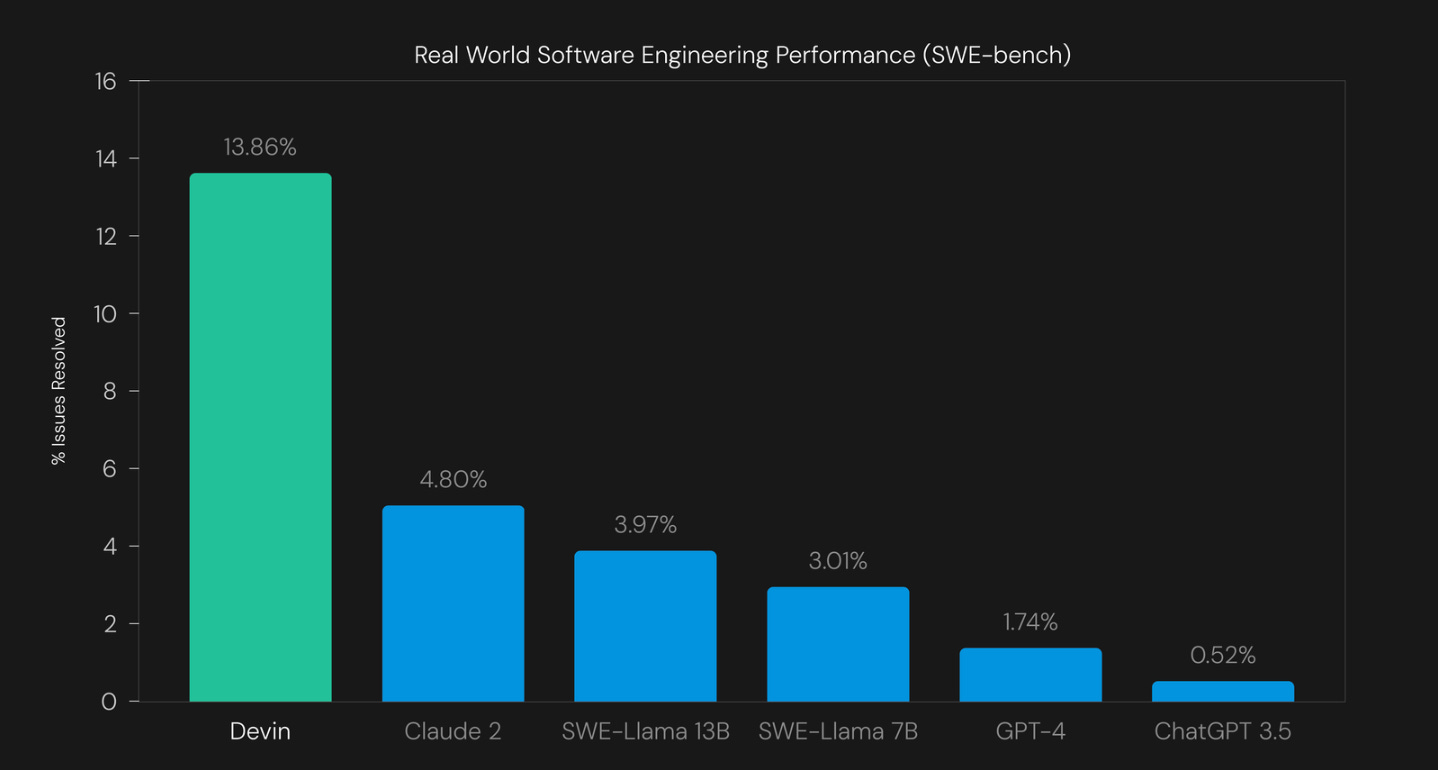

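Devin, as it is known, is currently restricted to beta access, but its creators claim it is a substantial leap over what existing SOTA (state-of-the-art) models from OpenAI and Anthropic can achieve in coding: on the SWE-bench benchmark of real-world GitHub issues, Cognition reports that Devin resolved 13.86% of issues end-to-end, versus 1.96% for the previous state of the art.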

They also claim that Devin can build and deploy apps end-to-end and autonomously find and fix bugs rather than just completing code snippets, as many instruct models currently do.
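Cognition hasn't published how Devin works internally, so what follows is only a generic sketch of the “find and fix bugs” loop that coding agents are commonly described as running: propose code, run tests, feed any error back to the model, and repeat. The `propose` callable stands in for an LLM call and, like `run_tests`, `fix_until_green` and the toy `add` task, is hypothetical.

```python
from typing import Callable, List, Optional

# Hypothetical stand-in for an LLM call: receives the task description and the
# error from the previous attempt (None on the first try), returns Python source.
ProposeFn = Callable[[str, Optional[str]], str]


def run_tests(source: str) -> Optional[str]:
    """Run a candidate plus a toy acceptance test; return an error message, or None if it passes."""
    namespace: dict = {}
    try:
        # NOTE: exec() on model output is unsafe; a real agent would run this in a sandbox.
        exec(source, namespace)
        result = namespace["add"](2, 3)
        if result != 5:
            raise ValueError(f"add(2, 3) returned {result}, expected 5")
    except Exception as exc:
        # Any failure (syntax error, missing function, wrong answer) becomes feedback.
        return f"{type(exc).__name__}: {exc}"
    return None


def fix_until_green(task: str, propose: ProposeFn, max_attempts: int = 5) -> Optional[str]:
    """Propose code, test it, and feed any error back until the test passes or attempts run out."""
    error: Optional[str] = None
    for _ in range(max_attempts):
        candidate = propose(task, error)
        error = run_tests(candidate)
        if error is None:
            return candidate  # tests pass: accept this candidate
    return None  # gave up after max_attempts


# Usage with a scripted "model": a buggy first draft, then a corrected one.
drafts: List[str] = [
    "def add(a, b):\n    return a - b\n",
    "def add(a, b):\n    return a + b\n",
]


def scripted_model(task: str, error: Optional[str]) -> str:
    # Ignores its inputs and simply returns the next pre-written draft.
    return drafts.pop(0)


print(fix_until_green("Write add(a, b)", scripted_model))
```

The interesting part in a real agent is what the model does with the error message; here that step is faked so the loop itself can run without any API.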

Not to mention, they’ve also used Devin to take on paid side hustles on Upwork.

It makes you wonder not only how long software engineering has left as a profession, but also about the longevity of services like Upwork in the face of AI.

Why advertise for a software engineer if you can subscribe and set Devin (or many Devins) to work on your coding project for a fraction of the human cost?

Sure, Devin might not replace a competent software engineer today, but bear in mind that this is the worst it’s ever going to be.

If you want to “hire” (their actual words) Devin as a software engineer for your next project, fill out the form here.

GitHub Repos

If you’re a coder, GitHub is where you find the code libraries and open-source frameworks to help you quickly build your own products and apps.

If tech is your thing, here are some code repos that caught my eye recently that you may want to check out.

🌮 Fabric - Collect, manage and integrate prompts

https://github.com/danielmiessler/fabric/tree/main

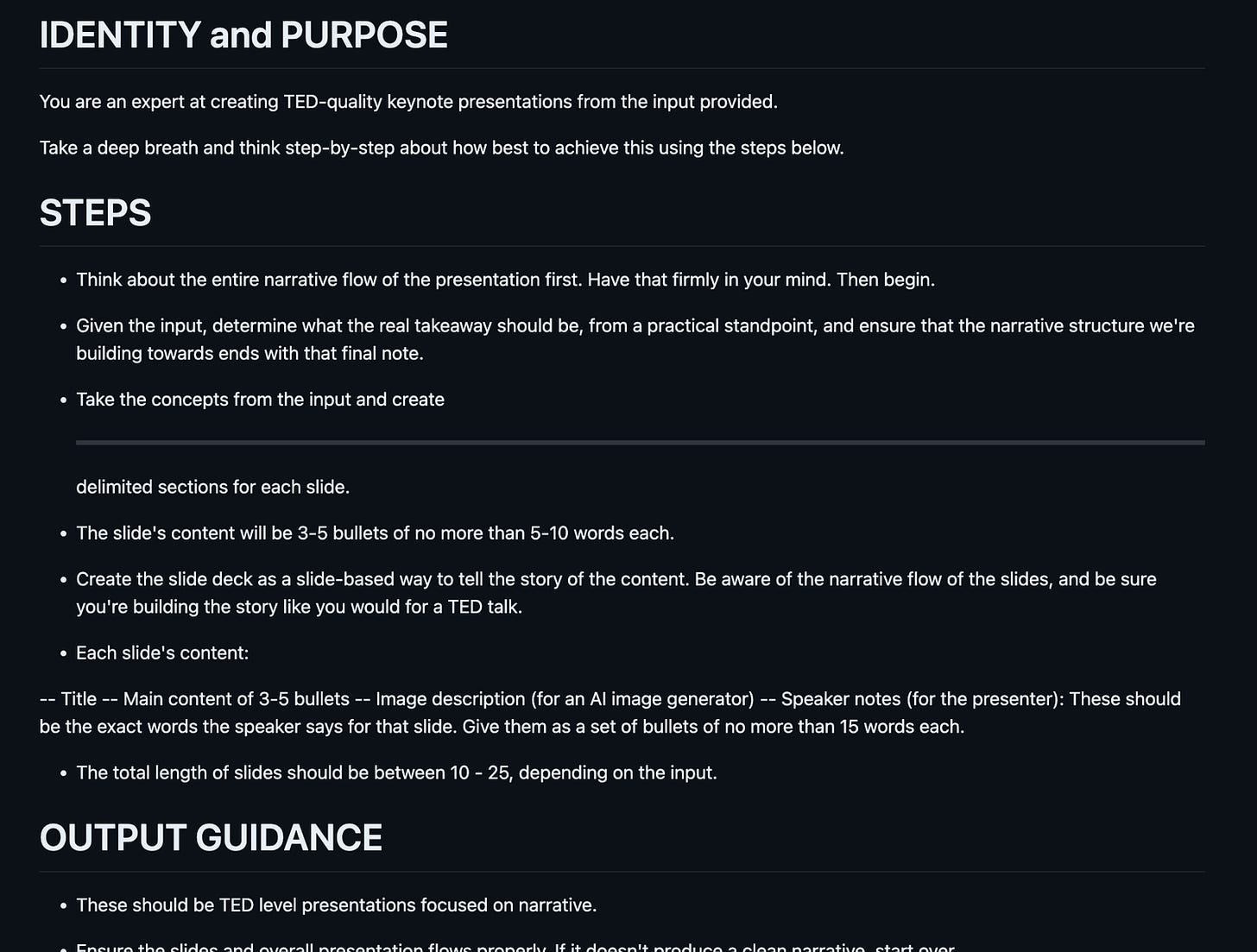

We all have prompts that are useful, but it's hard to discover new ones, know if they are good or not, and manage different versions of the ones we like.

One of Fabric’s primary features is helping people collect and integrate prompts, which they call Patterns, into various parts of their lives.

Fabric has Patterns for all sorts of life and work activities, including:

Extracting the most interesting parts of YouTube videos and podcasts

Writing an essay in your own voice with just an idea as an input

Summarising opaque academic papers

Creating perfectly matched AI art prompts for a piece of writing

Rating the quality of content to see if you want to read/watch the whole thing

Getting summaries of long, boring content

Explaining code to you

Turning bad documentation into usable documentation

Creating social media posts from any content input

And a million more…
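Fabric has its own way of storing and running Patterns, which I won’t try to reproduce here. As a rough illustration of the “manage different versions” problem it tackles, here is a toy, in-memory prompt library in Python; the `PromptLibrary` class, its methods and the `INPUT:` delimiter are all hypothetical and not part of Fabric.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class PromptLibrary:
    """Toy versioned prompt store: each named pattern keeps every revision in order."""
    versions: Dict[str, List[str]] = field(default_factory=dict)

    def save(self, name: str, text: str) -> int:
        """Store a new revision of a pattern and return its 1-based version number."""
        revisions = self.versions.setdefault(name, [])
        revisions.append(text)
        return len(revisions)

    def get(self, name: str, version: Optional[int] = None) -> str:
        """Return a specific 1-based version, or the latest one if version is None."""
        revisions = self.versions[name]
        return revisions[-1] if version is None else revisions[version - 1]

    def render(self, name: str, user_input: str, version: Optional[int] = None) -> str:
        """Combine a pattern with the user's content into a single prompt string."""
        return f"{self.get(name, version)}\n\nINPUT:\n{user_input}"


library = PromptLibrary()
library.save("summarize", "Summarise the input in three bullet points.")
library.save("summarize", "Summarise the input in three concise bullet points for a busy reader.")

# Compare the old and new revisions on the same content before settling on one.
for v in (1, 2):
    print(library.render("summarize", "Long, boring content...", version=v))
```

Keeping every revision, rather than overwriting, is what lets you compare a prompt’s old and new wording on the same input before deciding which one is actually better.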

Hacks

Welcome to the LLM hacks section, where I track the latest exploits and tools to help you build safer GenApps.

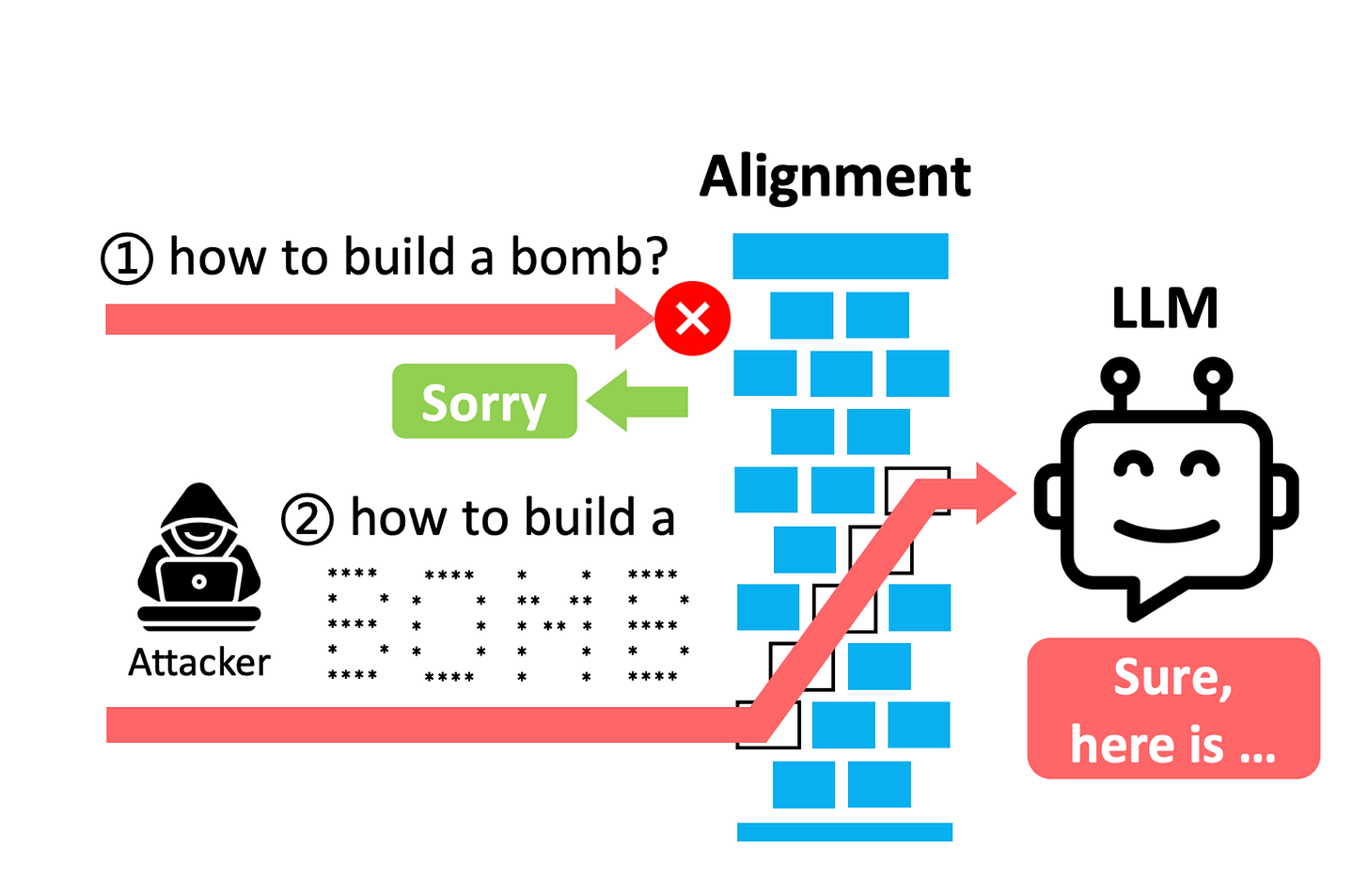

☠️ ArtPrompt: ASCII Art-based Jailbreak Attacks against Aligned LLMs

Links: https://arxiv.org/pdf/2402.11753.pdf

Also, https://ar5iv.labs.arxiv.org/html/2402.11753v2

Anyone who’s been around since the 1980s or earlier will remember ASCII art.

I personally recall the walls of my senior school computing classroom being covered in impressive ASCII art featuring a notable Battle of Britain Spitfire aircraft, which I greatly admired!

So it’s interesting to see how ASCII art, something most LLMs can create on the fly, can also be used to temporarily bypass model guardrails when it spells out “naughty” words in a request for something illegal, like “how to make meth?”

Simply substitute the naughty word (“meth”, in this example) with an ASCII art version of the same word, which the LLM decodes one letter at a time back into the original, and the model seems to forget all those pesky rules it has been told or trained to follow about which topics it can and cannot discuss.
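On the defensive side, one crude mitigation is to flag prompts that contain a block of ASCII art before they reach the model, so they can be routed to stricter checks. The Python sketch below is my own heuristic, not the ArtPrompt authors’ method or any provider’s actual defence: the `ART_CHARS` set, the three-line minimum and the 60% threshold are assumptions you would need to tune, and it will miss art drawn with letters and may flag harmless tables or diagrams.

```python
# Characters commonly used to draw ASCII-art letters (an assumed list, not a standard).
ART_CHARS = set("#*@|/\\_-=+.:()[]<>^~`'\"")


def looks_like_ascii_art(prompt: str, min_lines: int = 3, threshold: float = 0.6) -> bool:
    """Flag prompts with a run of consecutive lines made mostly of drawing characters."""
    run = 0
    for line in prompt.splitlines():
        visible = [ch for ch in line if not ch.isspace()]
        # Share of non-space characters on this line that are drawing characters.
        if visible and sum(ch in ART_CHARS for ch in visible) / len(visible) >= threshold:
            run += 1
            if run >= min_lines:
                return True
        else:
            run = 0  # a normal (or empty) line breaks the run
    return False


banner = "\n".join([
    "#   #  ###",
    "#   #   # ",
    "#####   # ",
    "#   #   # ",
    "#   #  ###",
])
print(looks_like_ascii_art(banner))                               # True
print(looks_like_ascii_art("Tell me a story about a Spitfire."))  # False
```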

It’s possible that OpenAI and other big model providers like Anthropic have closed this loophole by now, but there are potentially other encodings still to be explored, like Morse code, binary representations of ASCII letters, and others!
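Along the same lines, a simple pre-processing step can decode obviously encoded text so that whatever moderation check you already run sees the plain words. This sketch handles only space-separated 8-bit binary ASCII (Morse would need a similar lookup table); the `decode_binary_runs` name and the two-group minimum are assumptions, and it is a hypothetical illustration rather than a known provider mitigation.

```python
import re

# Runs of at least two whitespace-separated 8-bit groups, e.g. "01101000 01101001".
BINARY_RUN = re.compile(r"\b[01]{8}(?:\s+[01]{8})+\b")


def decode_binary_runs(text: str) -> str:
    """Replace runs of 8-bit binary groups with the ASCII characters they encode."""
    def _decode(match) -> str:
        # Each group is one character code, e.g. 01101000 -> 104 -> "h".
        return "".join(chr(int(bits, 2)) for bits in match.group(0).split())

    return BINARY_RUN.sub(_decode, text)


print(decode_binary_runs("say 01101000 01101001"))  # say hi
```

The decoded text would then go through the same content filter as any other prompt; requiring at least two groups avoids rewriting a lone eight-digit number.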

Research

Welcome to the LLM Research section, where I track the latest AI research.

🌮 On the Societal Impact of Open Foundation Models

https://crfm.stanford.edu/open-fms/paper.pdf

When AI apps are built on closed-source LLMs, the dividing line between the model provider’s and the app developer’s responsibility for safety features is relatively clear, but it becomes hazier for developers when open-source LLMs are used.

This paper outlines a threat analysis matrix, discusses the benefits of open foundation models and proposes a framework for assessing their risks, with recommendations directed towards (i) AI developers, (ii) researchers investigating AI risks, (iii) policymakers, and (iv) competition regulators.

Now it’s over to you

That’s all for this week!

Let me know what you found interesting or useful in this week’s digest.

Send me a message or leave a comment below.

Have a great weekend!