Daily Digest 008 - Anthropic Releases Claude 3 "GPT-4 Killer" and Elon Files Legal Beef with OpenAI

News, research, hacks, repos and apps from the world of Generative AI.

Hi folks, welcome to edition 008 of the new BotZilla “Daily” Digest format, where I select the most interesting news stories, research papers, GitHub repos, GPTs, tools and apps to help you in your quest to integrate GenAI into your business.

Disclaimer: There are a ton of links in this digest, and while I make every effort to ensure each is harmless, please exercise your cyber due diligence before installing or using any software linked from this post.

Quotable

“AI will probably most likely lead to the end of the world, but in the meantime, there'll be great companies.”

Sam Altman

CEO, OpenAI

Latest AI News

Anthropic Releases Claude 3, But Is It A ChatGPT Killer?

This week, Anthropic, one of the BIG 3 closed model creators (along with OpenAI and Google), released their new family of Claude 3 models.

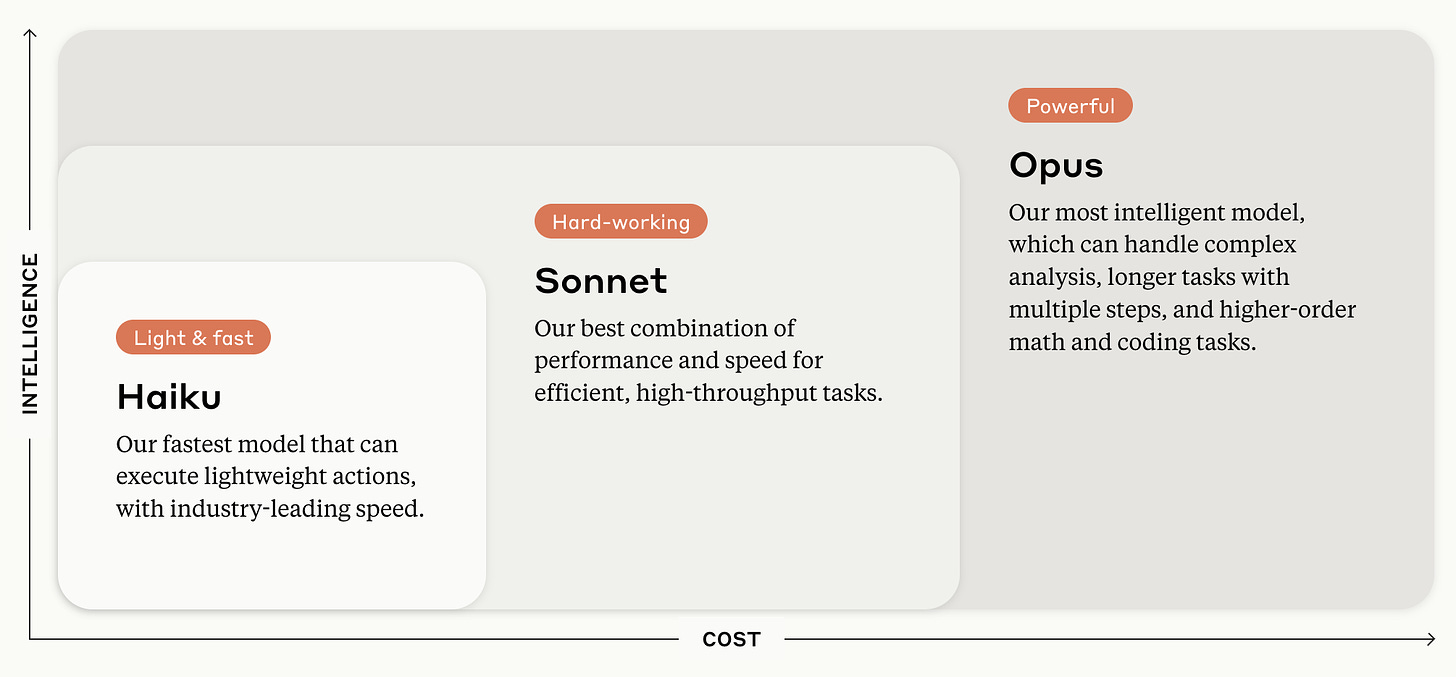

The family includes, in ascending order of capability:

Claude 3 Haiku, fast and lightweight

Claude 3 Sonnet, best performance-cost combination for most tasks

Claude 3 Opus, the most intelligent but expensive model

In brief, the models are multimodal (accepting text and image inputs), with a 200k-token context window (~140k words) at launch. However, according to Anthropic, all three models are capable of accepting inputs exceeding 1 million tokens (~700k words).
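As a rough sanity check on those word counts, the figures above imply about 0.7 words per token. The sketch below simply applies that ratio. The ratio is inferred from this post's own numbers and will vary with the tokenizer and the text, and the function name is made up for illustration.

```python
# Rule of thumb implied by the figures above (200k tokens ~ 140k words).
# The real ratio depends on the tokenizer and the text, so treat this as an estimate.
WORDS_PER_TOKEN = 0.7


def estimate_words(tokens: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Estimate how many English words fit in a given number of tokens."""
    return round(tokens * words_per_token)


if __name__ == "__main__":
    print(estimate_words(200_000))    # the launch context window
    print(estimate_words(1_000_000))  # the larger input size Anthropic mentions
```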

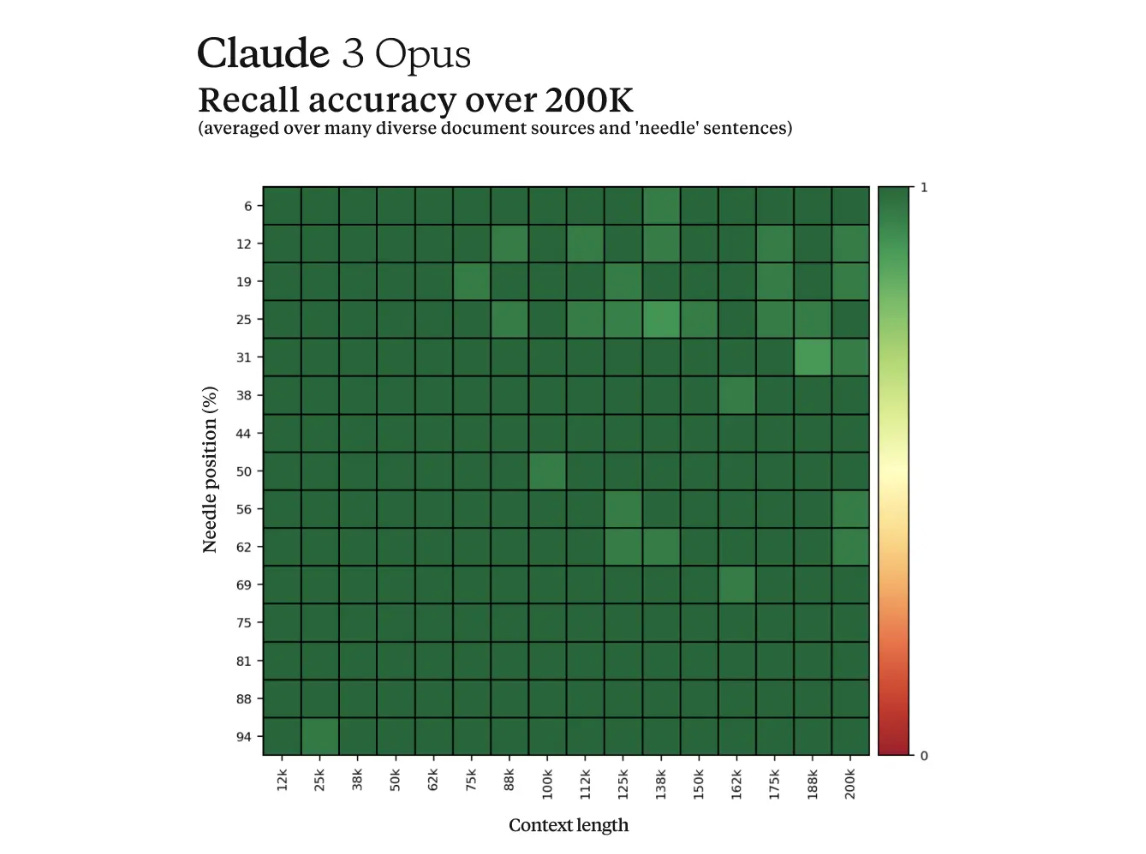

Like the recent Gemini 1.5 model from Google, Anthropic claims near-perfect recall from this large context window in “Needle In A Haystack” (NIAH) evaluations.

In the past, Anthropic has claimed that its models are built “responsibly” using a technique they call Constitutional AI. This provides the model with a set of principles and guardrails against which it can evaluate its own outputs and be what Anthropic describes as “a helpful, honest, and harmless assistant”.

Personally, I’m unsure whether this is a gimmick or, indeed, something powerful. What it does appear to have led to in the past is a higher rate of refusals to answer legitimate questions than other models like GPT-4, which has proved pretty solid after generating many billions of tokens in response to users’ requests.

Anthropic seems to have taken this criticism on board and highlighted that the latest models have fewer refusals of harmless prompts in comparison to older models (like Claude 2.1).

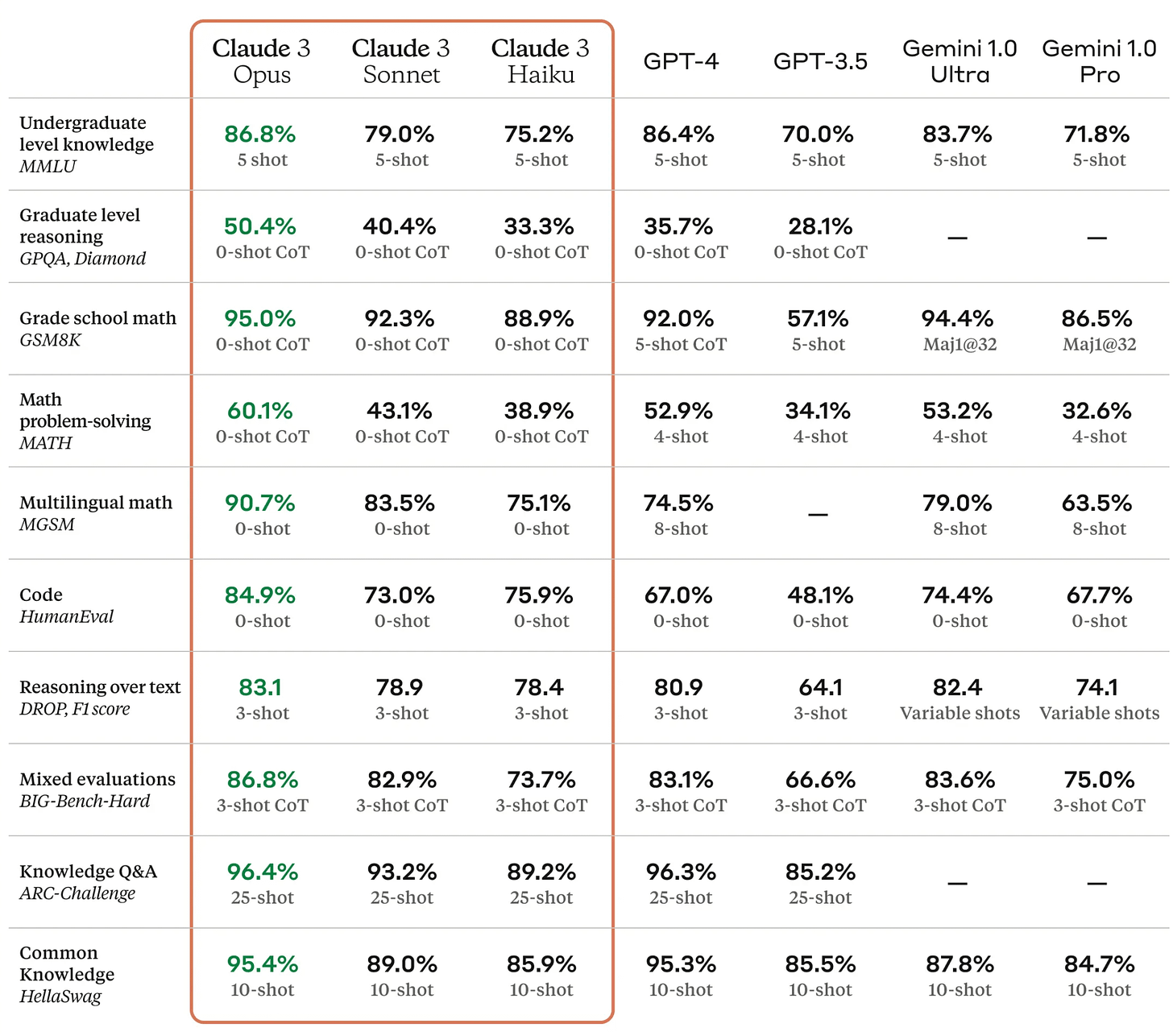

In terms of capability versus OpenAI’s models and Google’s (older) Gemini models, Anthropic claims that Claude 3 performs better, as per the table of tests below.

On paper, it looks like Claude 3 is better than the leader GPT-4 from OpenAI.

However, companies do (understandably) tend to pick and choose their benchmark tests to present their own models in the best possible light compared to their competitors.

What is apparent from the tests is that this model is at least comparable to OpenAI’s GPT-4 and possibly a little more advanced, but not a huge leap forward in capability. In my view, it’s more of a catch-up release than one that completely demolishes GPT-4.

Will it be enough to tempt users and developers away from GPT-4 and Gemini 1.5?

It depends; the devil is in the detail, as they say.

Personally, I’ve not been hugely impressed by previous models released by Anthropic when compared to OpenAI’s offerings. This was mainly due to the combination of high refusal rates and hallucinations.

But if they have taken these criticisms from the community on board and mostly fixed them, then Claude 3 might be worth a much deeper investigation.

What is clear is that OpenAI’s near 12-month lead with GPT-4 has now been eroded to virtually nothing, and their best model right now might even be trailing the latest models from Google and Anthropic, so we should expect a reply in the near future.

Whether that’s an incremental release such as GPT-4.5 with better reasoning, recall and context window, or a newly architected GPT-5 using the rumoured Q-Star (Q*) architecture (see below), is anyone’s guess, but either way, it’s going to be an exciting year full of significant advancements.

Get access to Claude 3 Sonnet here.

Claude 3 Opus is available as an upgrade with a monthly subscription.

📰 In other news

Elon Musk Files Legal Complaint Against OpenAI, Altman and Brockman

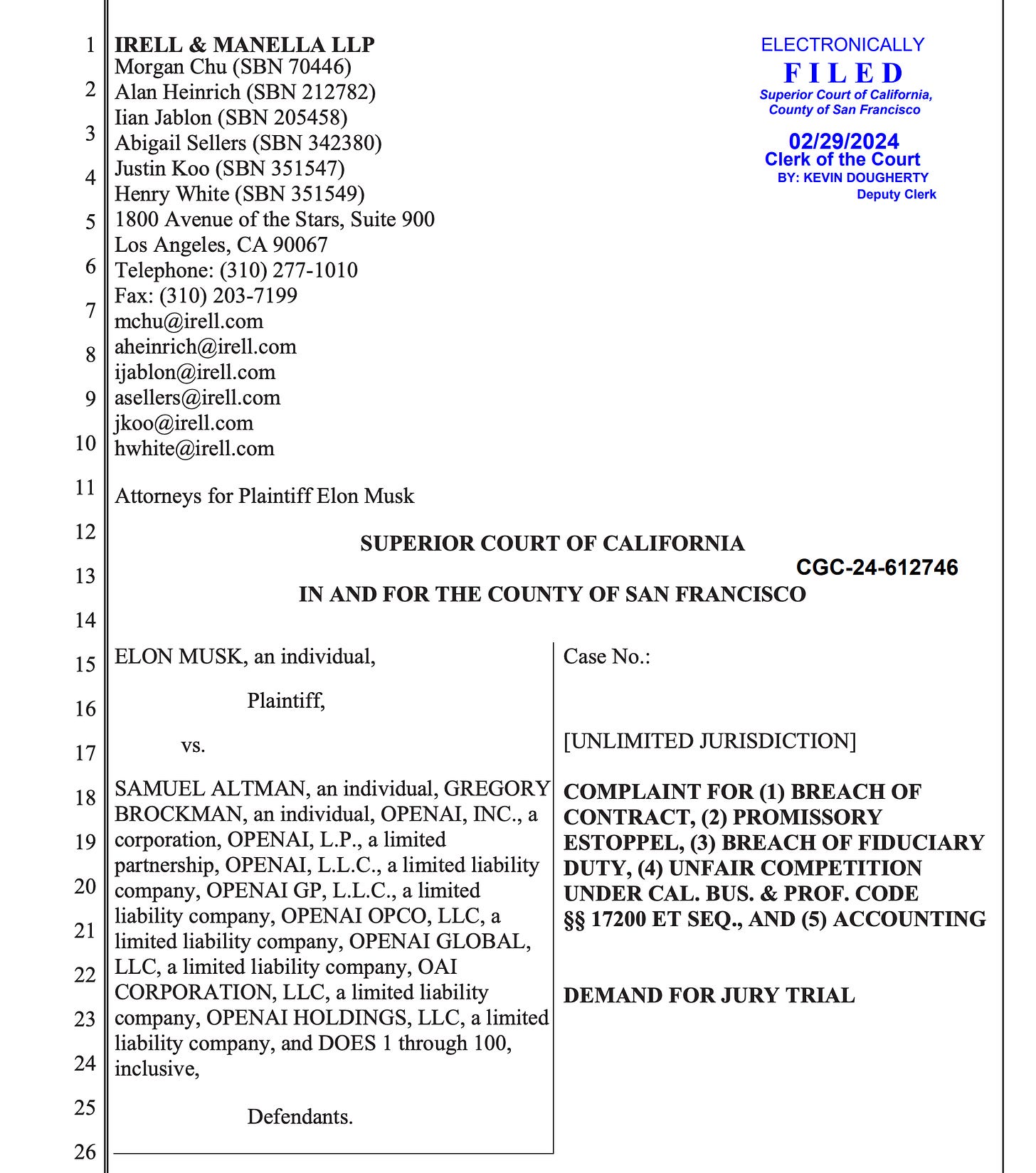

Not one to shy away from the limelight, Elon Musk filed a legal complaint against various OpenAI entities, as well as individually against Sam Altman and Greg Brockman, demanding a trial by jury to resolve his complaints.

Elon’s legal beef accuses the defendants of breach of contract, breach of fiduciary duty, unfair competition, and promissory estoppel. The last is a legal doctrine under which a promise can be enforced even though it was never formally enshrined in a contract, provided the other party reasonably relied on it; here, it refers to promises or commitments allegedly made by Samuel Altman, Gregory Brockman, and/or OpenAI.

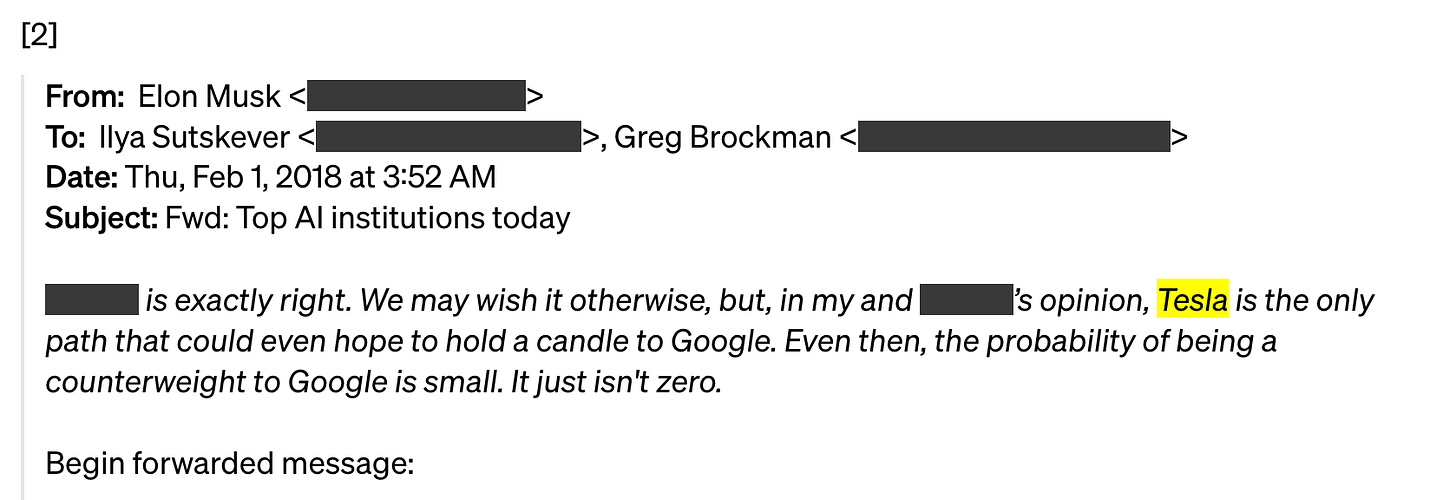

After a few days of consideration, OpenAI published its own response to Elon’s lawsuit on its website. The response included private emails from Elon Musk to various members of the OpenAI management team, allegedly showing he was openly considering pushing for OpenAI to move from a non-profit to a for-profit organisation, albeit under the brand name of Tesla, a company he controls.

However, what is more interesting about the legal complaint, from an AI perspective, is the obsession Elon has with AGI and specifically Q* (Q-star), rumoured to be the new AI algorithm discovered by OpenAI and in some way allegedly related to Sam Altman’s firing last November (he was quickly reinstated after a huge outcry by his team).

The document alleges that Sam Altman’s firing was due to him not being “candid” with the board about the new model’s abilities.

It’s been hinted that if Q* is considered AGI, OpenAI’s agreement with Microsoft would be terminated, or at least Microsoft wouldn’t get the benefits of any AGI developments made by OpenAI.

However, it’s plausible that if Mr Altman saw a continued need for Microsoft’s investment and resources to potentially “finish off the job” of building AGI, not to mention AGI's enormous commercial value, this could tie in with a motive for him not being “candid” with the board about its capabilities.

The legal document alleges Q* is a secretive algorithm being developed by OpenAI and suggests it may represent a significant advancement in artificial general intelligence (AGI) that could potentially surpass previous models, including GPT-4, in terms of capabilities.

The document also alleges, as reported by news sources, that several OpenAI staff members wrote a letter warning about the power of Q*, indicating its potential to be part of a more definitive example of AGI developed by OpenAI.

Furthermore, it suggests that Q* might be explicitly outside the scope of OpenAI’s license with Microsoft and should be made available for the public benefit, aligning with the founding agreement's objectives to develop AGI for the benefit of humanity rather than for proprietary commercial interests.

The document also suggests that even GPT-4's performance in various tasks might qualify it as an early (weak) form of AGI, given its ability to perform at or above human levels in tests such as the Uniform Bar Exam, the GRE Verbal Assessment, and the Advanced Sommelier examination.

Moreover, it mentions Microsoft researchers' view of GPT-4 as potentially being an early version of AGI in their infamous Sparks of AGI research paper, published in March 2023.

With respect to GPT-4 and Q* being AGI, Musk asks in his legal suit:

“B. For a judicial determination that GPT-4 constitutes Artificial General Intelligence and is thereby outside the scope of OpenAI’s license to Microsoft;

C. For a judicial determination that Q* and/or other OpenAI next-generation large language models in development constitute(s) Artificial General Intelligence and is/are outside the scope of OpenAI’s license to Microsoft;”

Fascinating stuff!

Although it’s entirely possible the legal case may be tossed out of court, as per OpenAI’s wishes, the whole saga adds more fuel to the fire of AGI being much closer than many experts outside of OpenAI, Google, and a few others think.

For more information on Q*, here’s a BotZilla post I wrote at the time:

GPTs, AI Tools and Apps

Fun and useful AI apps! 🎇

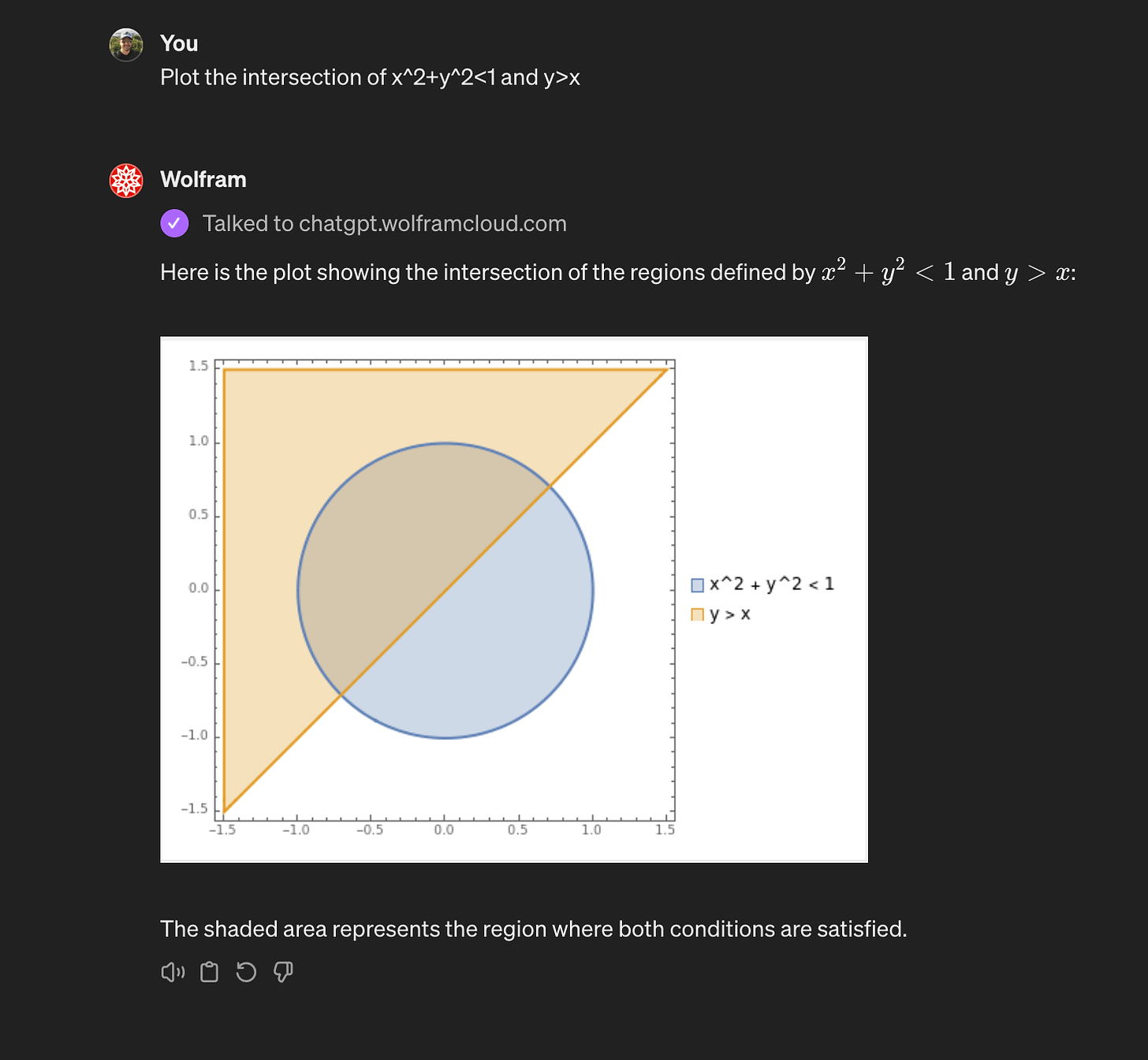

🏖️ Wolfram - GPT

Link: Wolfram

Access computation, math, curated knowledge & real-time data from Wolfram|Alpha and Wolfram Language; from the makers of Mathematica.

🏖️ TutorMe - By Khan Academy

Link: TutorMe

If you have school-age kids, you’ve probably used Khan Academy online at some point to help them revise or understand their school work.

This GPT helps kids use the Socratic Method to solve questions without giving them the answers, so it’s a kind of constitutional educational AI tutor.

GitHub Repos

If you’re a coder, GitHub is where you find the code libraries and open-source frameworks to help you quickly build your own products and apps.

If tech is your thing, here are some code repos that caught my eye recently that you may want to check out.

🌮 Generative AI for Beginners (Version 2) - A Course

https://github.com/microsoft/generative-ai-for-beginners?tab=readme-ov-file

An 18-lesson course covering distinct topics, so start wherever you would like!

Lessons are labelled as either "Learn" lessons, which explain a Generative AI concept, or "Build" lessons, which explain a concept and provide code examples in both Python and TypeScript when possible.

Research

Welcome to the LLM Research section, where I track the latest AI research.

🌮 The Benefits of a Concise Chain of Thought on Problem-Solving in Large Language Models

https://arxiv.org/pdf/2401.05618.pdf

Concise Chain of Thought (CCoT) is achieved by instructing the LLM to both “think step-by-step” and “be concise”.
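To make that concrete, here is a minimal sketch of how the two instructions might be combined into a single prompt. The exact wording used in the paper may differ, and the function name and prompt layout are assumptions made for illustration only.

```python
def build_ccot_prompt(question: str) -> str:
    """Prefix a question with the two CCoT instructions quoted above.

    The layout (instructions, blank line, question) is illustrative only;
    it is not taken from the paper.
    """
    instructions = "Think step-by-step. Be concise."
    return f"{instructions}\n\nQuestion: {question}\nAnswer:"


if __name__ == "__main__":
    print(build_ccot_prompt("A train travels 120 km in 2 hours. What is its average speed?"))
```

The resulting string can be sent to whichever model you are using as the user message or as part of a system prompt.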

CCoT reduced overall response length by 48.70% compared to verbose CoT, resulting in token savings.

CCoT produced total cost savings of 21.85% for GPT-3.5 and 23.49% for GPT-4. These cost savings should scale linearly whilst maintaining accuracy similar to verbose CoT.
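One plausible reason the cost savings are smaller than the reduction in response length is that the prompt (input) tokens are billed as well, and CCoT does not shorten those. The sketch below works through that arithmetic. The token counts and prices in the usage example are hypothetical placeholders, not figures from the paper or from any provider's price list.

```python
def total_cost(input_tokens: float, output_tokens: float,
               input_price: float, output_price: float) -> float:
    """Cost of one request when input and output tokens are priced separately."""
    return input_tokens * input_price + output_tokens * output_price


def ccot_savings(input_tokens: float, verbose_output_tokens: float,
                 input_price: float, output_price: float,
                 length_reduction: float = 0.487) -> float:
    """Fraction of total cost saved when only the output shrinks by length_reduction."""
    verbose = total_cost(input_tokens, verbose_output_tokens, input_price, output_price)
    concise_output = verbose_output_tokens * (1 - length_reduction)
    concise = total_cost(input_tokens, concise_output, input_price, output_price)
    return (verbose - concise) / verbose


if __name__ == "__main__":
    # Hypothetical request: 500 prompt tokens, 300 verbose-CoT response tokens,
    # with output tokens priced at twice the input rate (arbitrary units).
    saving = ccot_savings(500, 300, input_price=1.0, output_price=2.0)
    print(f"Estimated total cost saving: {saving:.1%}")
```

Because the saving is a fixed fraction of each request's cost, the absolute amount saved grows in proportion to the number of requests, which is consistent with the linear scaling mentioned above.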

Now it’s over to you

That’s all for this week!

Let me know what you found interesting or useful in this week’s digest.

Send me a message or leave a comment below.

Have a great weekend!