Daily Digest 013 - Is Open Source AI the Way Forward, or Too Risky? Also, Llama-3, AI Music and AI Talking Faces

News, research, hacks, repos and apps from the world of Generative AI.

Hi folks, welcome to edition 013 of the new BotZilla “Daily” Digest format, where I select the most interesting news stories, research papers, GitHub repos, GPTs, tools and apps to help you in your quest to integrate GenAI into your business.

Disclaimer: There are a ton of links in this digest, and while I make every effort to ensure each is harmless, please exercise your cyber due diligence before installing or using any software linked from this post.

Quotable

“It will mean that 95% of what marketers use agencies, strategists, and creative professionals for today will easily, nearly instantly and at almost no cost be handled by the AI — and the AI will likely be able to test the creative against real or synthetic customer focus groups for predicting results and optimizing. Again, all free, instant, and nearly perfect. Images, videos, campaign ideas? No problem.”

Sam Altman (discussing the use of AI in marketing in the near future)

CEO OpenAI

Latest AI News

Is Open Source AI the Way Forward, or Too Risky?

There are two main camps when it comes to AI.

In camp 1, we have OpenAI (“Open” is a misnomer in this instance), Microsoft, Google, Anthropic, and other “big tech” and VC-funded AI companies that offer so-called closed-source AI models to customers.

In closed models, it is not possible to see under the hood and tinker with low-level model details, although some do allow a certain amount of fine-tuning using bespoke data to tailor the model further.

Closed model AI is akin to buying a ready-built car, such as a Tesla or BMW, which comes preconfigured, ready to use, and is usually highly performant.

By contrast, in camp 2 are the open-source proponents, chief among them Meta (formerly called Facebook) and Mistral, a French VC-funded AI company. These two organisations are just the tip of a very large iceberg of model creators offering literally hundreds of thousands of AI models.

Open-source AI models, on the other hand, are comparable to building your own vehicle.

You get the parts off the shelf and put them together or tweak certain components to your requirements. Experts can even ‘manufacture’ some of the components themselves and insert them into the model.

In terms of performance, open-source models are generally seen as lagging behind the most powerful closed-source frontier models, like GPT-4, Gemini Ultra or Claude Opus, by around 9-12 months, although this gap has been closing recently.

There is also a raging debate in the AI community regarding whether it’s safe to continue releasing ever-more-powerful open models that any person, organisation, government, or institution can use and configure for their own purposes.

Open-source models can be run on privately owned proprietary hardware and, therefore, are outside the scope of any authority, including the original creators. In theory, this allows model guardrails to be bypassed or disabled, meaning the model can share dangerous information or undertake destructive tasks like political propaganda creation at scale.

If the underlying code for the open model is shared (in some “open” models, this is not the case), the model can act as the foundation for hostile nations or sophisticated criminal gangs to develop their own proprietary architectures.

Although models can certainly produce fake content at scale, including text, images, audio and increasingly video (see below), most people believe that current models, including the several GPT-4-level frontier models that make up the state of the art, are not yet dangerous enough to need ring-fencing from bad actors.

I think this will change, however, especially as general model capabilities approach AGI. Even now, specialised AI models, like VASA-1 from Microsoft (see below), are too dangerous to release to the general public as they could create realistic fake videos of anyone saying anything.

In a recent interview between Mark Zuckerberg (founder & CEO of Meta) and AI YouTuber Dwarkesh Patel, Zuck said that he’s not “dogmatic” about releasing every single future AI model they produce as open source if he thinks it could be dangerous.

On the plus side, AI experts claim that the main benefit of open-sourcing AI is that anyone (with the required skills and financial resources) can use an open AI model to develop their own proprietary model.

This means that if a person or organisation develops a “bad” AI using open tech, another can use the same tech to develop a “good” version to fight it.

Open sourcing also stops powerful AI from being concentrated in the hands of a few people, organisations or institutions.

Whilst these arguments might appear to be logically sound, it’s worth noting that not every side is equal in a fight.

A military rule of thumb, often attributed to Carl von Clausewitz, the Prussian general and military theorist behind the seminal 1832 work “On War,” holds that an attacking force should be at least three times the size of the defending force to win the battle. However that translates into AI terms (GPUs, benchmarks, etc.), it’s worth bearing in mind for future battles between the forces of good and evil AI systems!

There are also the economic benefits that very powerful AI models will convey to their users.

Do we want nation-states like China, Russia, North Korea, and Iran to benefit from AI developed in the West and used to compete against us? Especially when they keep their own tech secrets to themselves.

I don’t think so.

So, at some point, open-source AI is going to become closed-source and probably very tightly controlled by the governments of the countries in which it is developed; in my opinion, it’s the only logical and safe route in an extremely adversarial world as the AI tech becomes more powerful and capable.

When that happens, however, is anyone’s guess.

But it will be interesting to see how much better OpenAI’s next model release, let’s call it GPT-5, will be than the current state-of-the-art models.

It’s not too much of a stretch of the imagination to say that all models, both open and closed, released in 2025/2026, will likely need to be tightly controlled through regulation if their progress follows the same trajectory of improvement as they have to date, so make the most of them whilst you can!

📰 In other news

Meta releases Llama-3 models

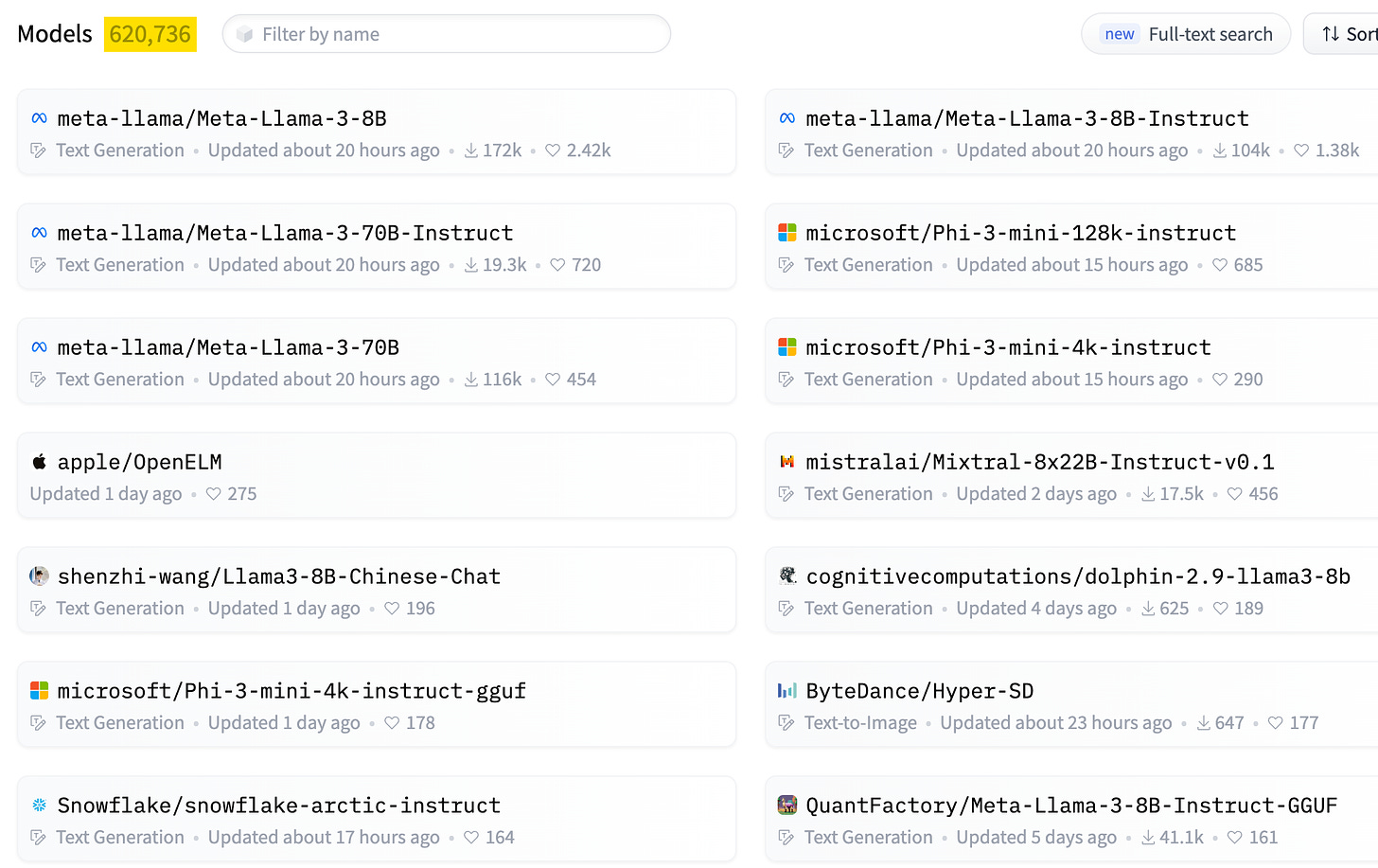

On the topic of open-source models, as soon as I pressed send on last week’s newsletter, my feed lit up with the news that Meta had released the long-awaited Llama-3 open-source model and by all accounts, it’s great!

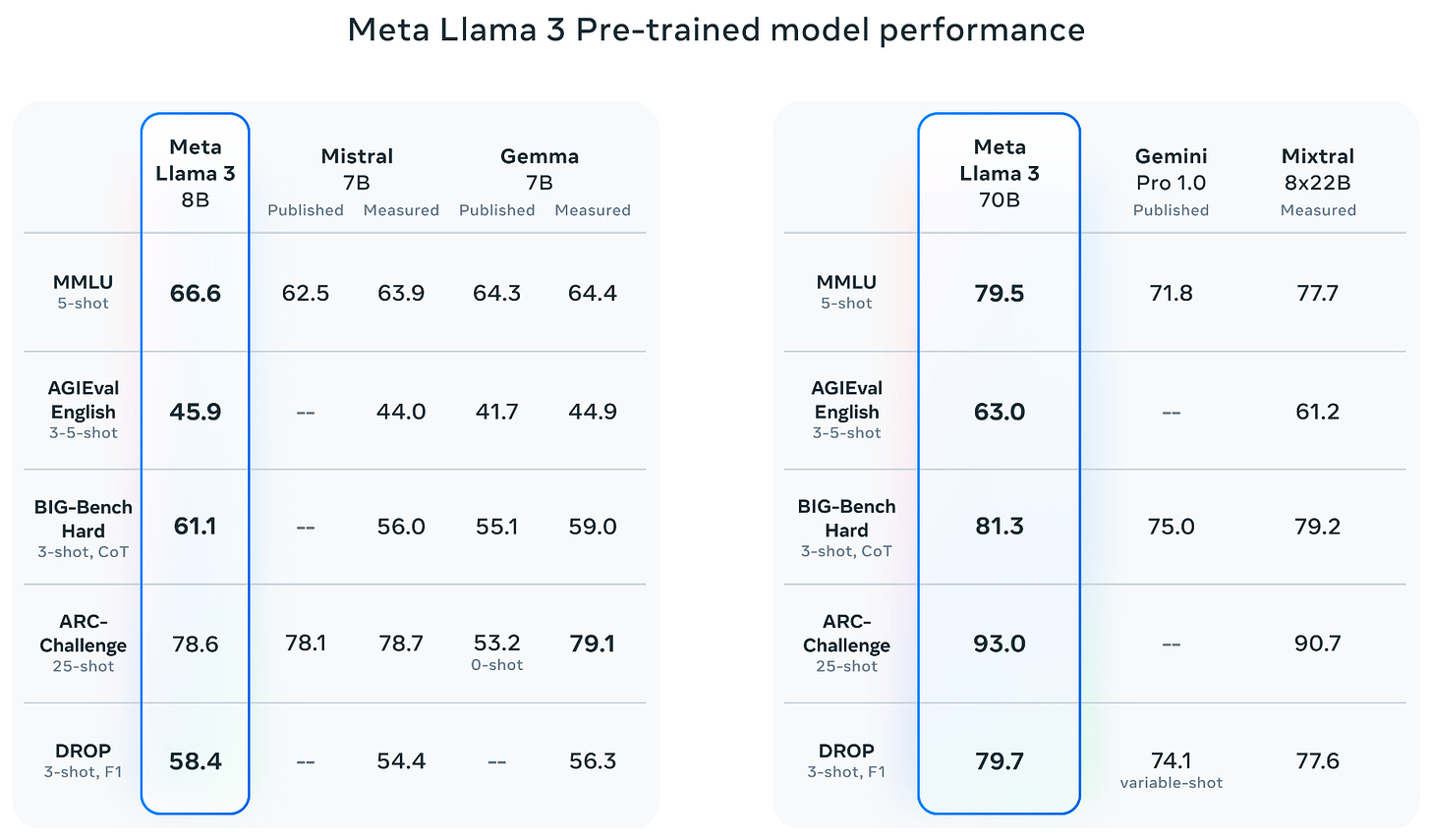

Llama-3 is currently available in 8B and 70B sizes, and both perform at the top end of most benchmarks compared to similar models in their class.

Meta also promised the release of a much larger 400B model sometime later in the year. When released, it is likely to perform at or close to GPT-4 levels.

In other words, if that holds, the Llama-3 400B model would match the current (as of writing) state-of-the-art closed models from Google, Anthropic, and OpenAI, and it would be free.

Of course, by that time, it’s possible that OpenAI (and Google and Anthropic) will have moved on and released GPT-5 (or whatever they call their next-generation models), pushing the boundaries further again.

However, for those wanting ultimate control, Llama-3 offers best-in-class open-source AI today for the two model sizes released.
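For a rough sense of what running these two sizes on your own hardware involves, here is a back-of-the-envelope sketch in Python. It assumes the memory needed for the weights is simply the parameter count multiplied by the bytes per parameter (2 for 16-bit, 1 for 8-bit, 0.5 for 4-bit), and it treats "8B" and "70B" as exactly 8 and 70 billion parameters. The function name and precision labels are my own for illustration, and real-world usage will be higher once activations, context cache and runtime overhead are included.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Assumption: ignores activations, KV cache and runtime overhead,
# so actual memory use will be higher than these figures.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str = "fp16") -> float:
    """Approximate gigabytes (10^9 bytes) needed just to hold the weights."""
    # (params_billions * 1e9 params) * (bytes per param) / (1e9 bytes per GB)
    return params_billions * BYTES_PER_PARAM[precision]


if __name__ == "__main__":
    # Hypothetical round-number sizes taken from the model names.
    for size in (8, 70):
        for precision in BYTES_PER_PARAM:
            gb = weight_memory_gb(size, precision)
            print(f"Llama-3 {size}B @ {precision}: ~{gb:.0f} GB for weights")
```

On these assumptions, the 8B model at 16-bit needs roughly 16 GB for its weights alone, while the 70B model needs roughly 140 GB, which is why the larger model typically calls for multiple GPUs or a lower-precision (quantised) version.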

For a more detailed insight into Llama-3 8B and 70B, check out Yannic’s overview, here:

Moderna deploys 400 GPTs to help accelerate the development of new treatments

In a joint marketing video with OpenAI, Moderna outlined how it is using ChatGPT Enterprise, having created over 400 GPTs to help accelerate every aspect of its business, from research and commercial to legal.

Furthermore, according to its chief legal officer, the legal department's GPT adoption rate is already 100%.

Anyone with one of OpenAI’s paid ChatGPT subscriptions can create a GPT.

AI Tools and Apps

VASA-1: Lifelike Audio-Driven AI Talking Faces Generated in Real Time

Link: https://www.microsoft.com/en-us/research/project/vasa-1/

Also: https://arxiv.org/abs/2404.10667

Microsoft announced VASA-1, a model that generates extremely realistic talking-face video in real time from a single still image and a speech audio clip, with lip-synching and facial expressions matched to the audio.

This technology paves the way for realistic, real-time avatars that emulate human conversational behaviours.

Here’s an example video from the VASA-1 site; the face and video are 100% AI-generated (i.e. no part is a real human being).

For most people, the output from VASA-1 is 100% believable and nearly indistinguishable from footage of real humans. Unsurprisingly, Microsoft has not yet offered the technology for general release.

Udio and Suno Music Generation

As well as video and audio generation, music generation has come on in leaps and bounds recently.

I’ve linked to two different AI music apps from Udio and Suno that do the same thing. They enable you to generate songs and lyrics in pretty much any music style you want with a simple text prompt.

For example, here’s a hip-hop track I generated about the BotZilla newsletter with a single line of text. It’s crazy realistic:

Sadly, I’m sure this kind of tech will have a huge negative impact on music artists going forward.

Maybe not the mega-celebrities like Taylor Swift, but jobbing musicians who make a modest living creating jingles for corporate videos and social media are going to struggle as this technology becomes more capable, ubiquitous and virtually free to use.

Now it’s over to you

That’s all for this week!

Let me know what you found interesting or useful in this week’s digest.

Send me a message or leave a comment below.

Have a great weekend!