Daily Digest 012 - Has AI Progress Stalled? And is Google's new Agent Playground Any Good? Plus 3 New Frontier Model Contenders.

News, research, hacks, repos and apps from the world of Generative AI.

Hi folks, welcome to edition 012 of the new BotZilla “Daily” Digest format, where I select the most interesting news stories, research papers, GitHub repos, GPTs, tools and apps to help you in your quest to integrate GenAI into your business.

Disclaimer: There are a ton of links in this digest, and while I make every effort to ensure each is harmless, please exercise your cyber due diligence before installing or using any software linked from this post.

Quotable

“The tools and technologies we've developed are really the first few drops of water in the vast ocean of what AI can do.”

Fei-Fei Li

Prof (CS @Stanford), Co-Director @StanfordHAI, CoFounder/Chair @ai4allorg, Researcher AI+healthcare

Latest AI News

Has AI Progress Stalled?

Although impressive, recent AI advancements seem much more incremental than at this time last year (spring 2023).

Last spring, OpenAI, the company responsible for ChatGPT, dropped its GPT-4 model, which, until very recently, left all other language models in the dust according to virtually every available benchmark.

Recently, however, that has changed.

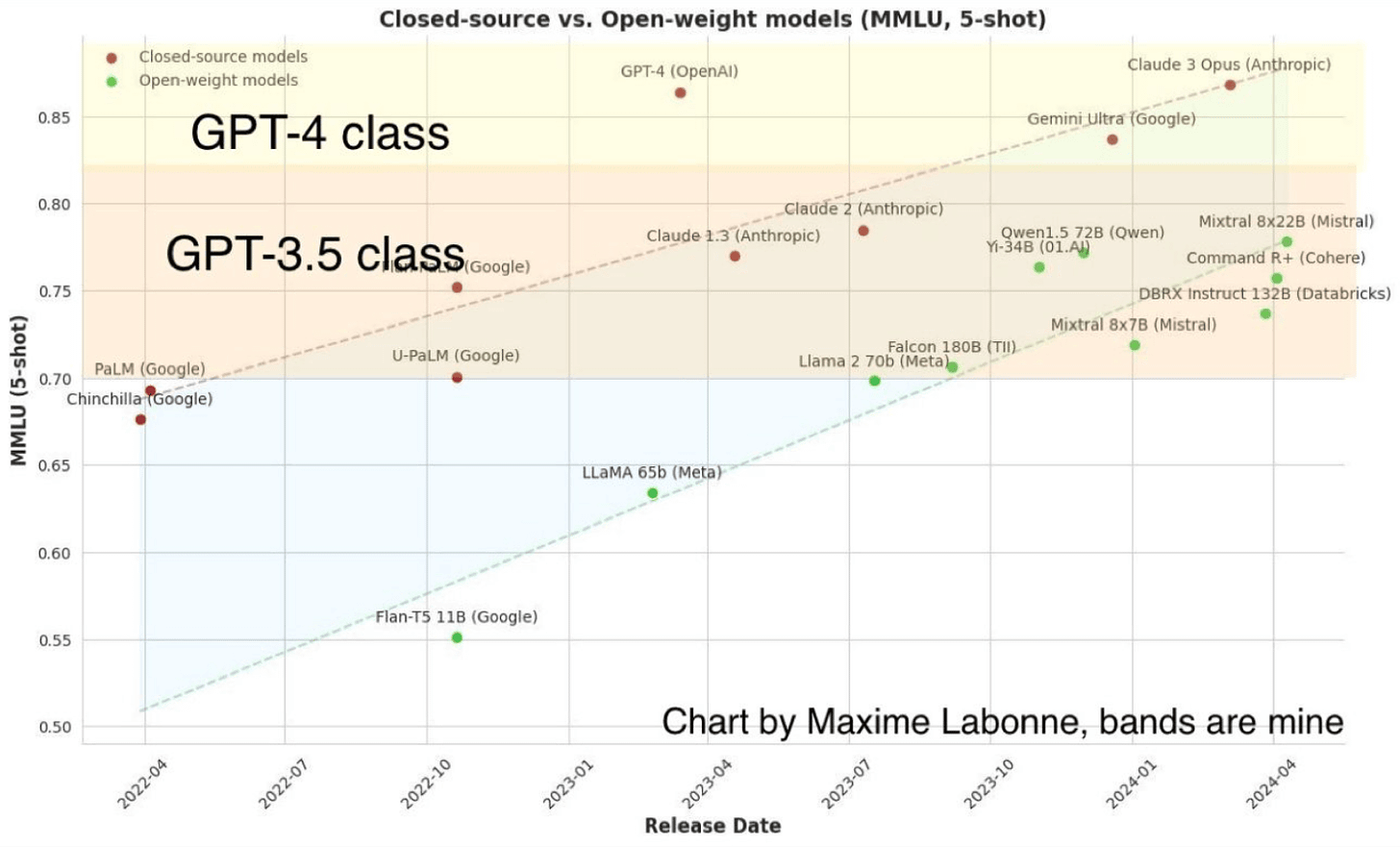

In this chart by Maxime Labonne (further annotated by Professor Ethan Mollick to mark the divide between GPT-3.5-class and GPT-4-class language models), you can see that, on the MMLU benchmark, Claude 3 Opus from Anthropic and Gemini Ultra from Google are now neck and neck with the long-time leader, GPT-4.

What’s more, “open weight” models, shown by green dots, are slowly catching up (although how “open” some of these models really are is contested).

To academics and LLM sceptics like Professor Gary Marcus, this is potential proof that LLMs' capabilities have stalled and progress has hit a ceiling, as none of the models have substantially surpassed the now-year-old (since release) GPT-4 model from OpenAI. Some even say we may have been led into an evolutionary dead-end by large language model technology.

Personally, I don’t think so and remain optimistic that multi-modal models will make substantial further leaps in capability this year.

However, one caveat:

I’m betting almost everything on OpenAI’s next generation of models, which is variously expected to be called something innovative like GPT-4.5 or GPT-5, to push the boundaries and prove me right!

Back in November, Sam Altman, OpenAI CEO, announced he was honoured to be “in the room” when the team “push(ed) the veil of ignorance back and the frontier of discovery forward”.

"Four times now in the history of OpenAI, the most recent time was just in the last couple weeks, I've gotten to be in the room, when we sort of push the veil of ignorance back and the frontier of discovery forward, and getting to do that is the professional honor of a lifetime,"

Source: Sam Altman at the Asia-Pacific Economic Cooperation summit.

The next day, the OpenAI board shockingly fired him for reasons still unknown, before he was reinstated within a week and exacted his own revenge on them.

Around this time, there was also much talk about a new algorithm known as Q* (Q-Star) that OpenAI was allegedly working on. This algorithm was rumoured to have solved some previously hard AI problems, potentially encompassing human-level mathematical reasoning.

Since then, Altman has also said that OpenAI is working on AI “agents” that can autonomously work on problems set for them in the background and report back to the human manager with updates as work on the task progresses.

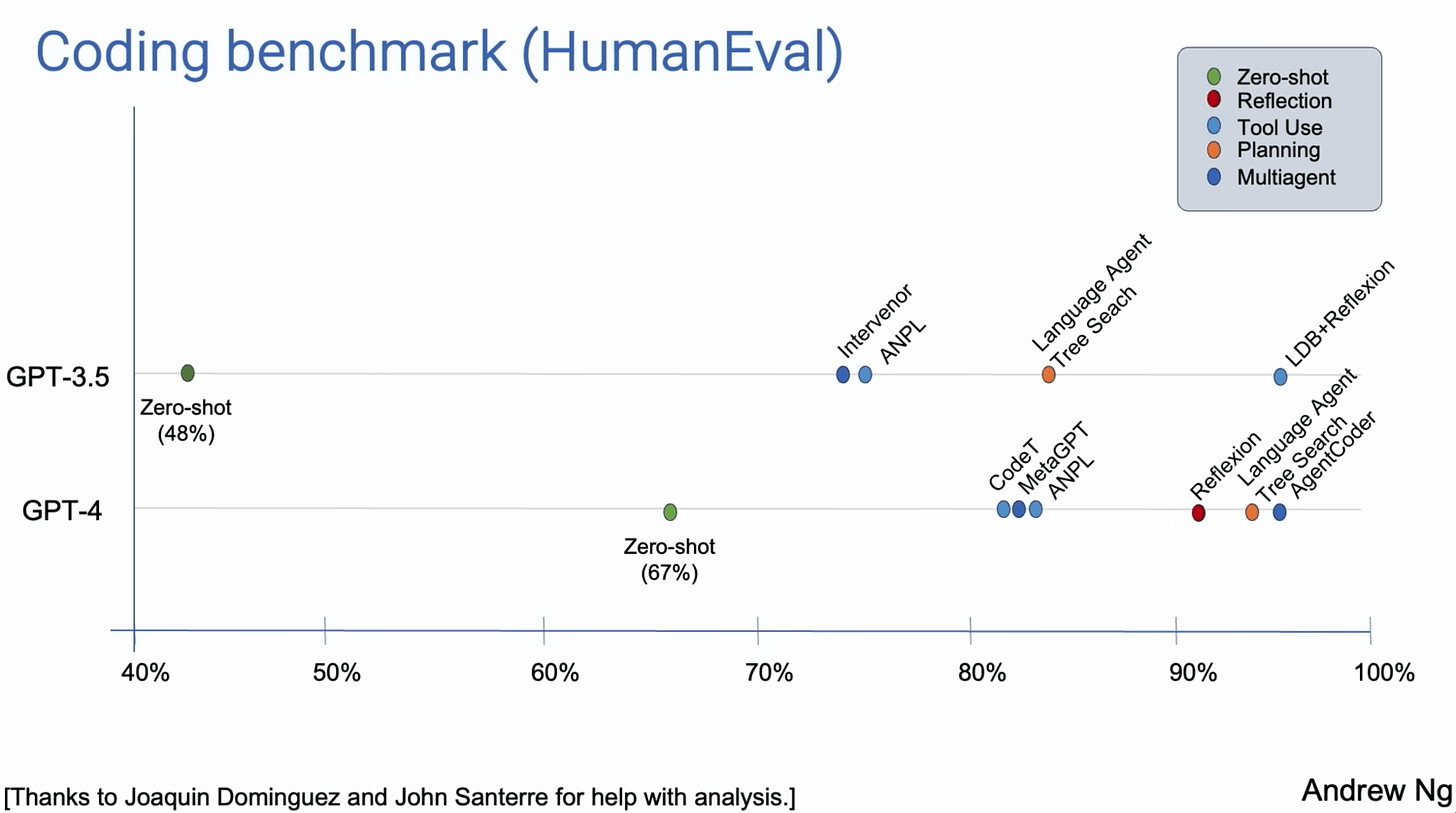

In a recent talk at Sequoia Capital, Andrew Ng, Stanford professor and co-founder of Coursera, described how even a model as crusty as GPT-3.5, when wrapped in agentic workflows (Reflection, Tool Use, Planning, and Multi-agent Collaboration), vastly outperformed the much more powerful GPT-4 used zero-shot on the HumanEval coding benchmark.
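To make the Reflection pattern a little more concrete, here is a minimal sketch of a draft, critique, revise loop. It is an illustration only, not Ng's implementation: `reflect_and_revise`, the prompt prefixes and `stub_llm` are all hypothetical names, and the stub stands in for a real model call so the loop runs offline.

```python
from typing import Callable

# Assumption: an "LLM" here is any function that maps a prompt to a completion.
LLM = Callable[[str], str]


def reflect_and_revise(task: str, llm: LLM, max_rounds: int = 3) -> str:
    """Draft an answer, ask the model to critique it, and revise until it passes."""
    draft = llm(f"TASK: {task}")
    for _ in range(max_rounds):
        critique = llm(f"CRITIQUE: {task}\n---\n{draft}")
        if critique.strip() == "OK":
            break  # the critic is satisfied, stop early
        draft = llm(f"REVISE: {task}\n---\n{draft}\n---\n{critique}")
    return draft


def stub_llm(prompt: str) -> str:
    """Toy stand-in for a real model (hypothetical behaviour, for illustration)."""
    if prompt.startswith("TASK:"):
        return "def add(a, b): return a - b"  # deliberately buggy first draft
    if prompt.startswith("CRITIQUE:"):
        return "OK" if "a + b" in prompt else "add() subtracts; use a + b"
    return "def add(a, b): return a + b"  # REVISE branch: the corrected draft


if __name__ == "__main__":
    print(reflect_and_revise("write add(a, b)", stub_llm))
```

In a real setup, `stub_llm` would be swapped for a call to whichever model you use, and the critique step could also run the generated code against unit tests rather than relying on the model's own judgement.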

Pulling all this together, OpenAI agents, plus whatever other advances the company manages to eke out (larger context memory, better reasoning), could mean we are all in for another major leap in capabilities soon.

📰 In other news

On the subject of AI agents, Google made GenAI and agents the main focus of the opening keynote at its annual Cloud Next ’24 conference.

They demoed so-called “enterprise-ready” agents built on their Gemini suite of multimodal models, including coding agents, personal assistants, and data agents, along with Vertex AI Agent Builder as a playground for building them (although reviews from some AI YouTubers have not been kind so far).

Model News

A lot has happened in the last week in terms of new model releases and updates.

Here’s a roundup of the most important developments.

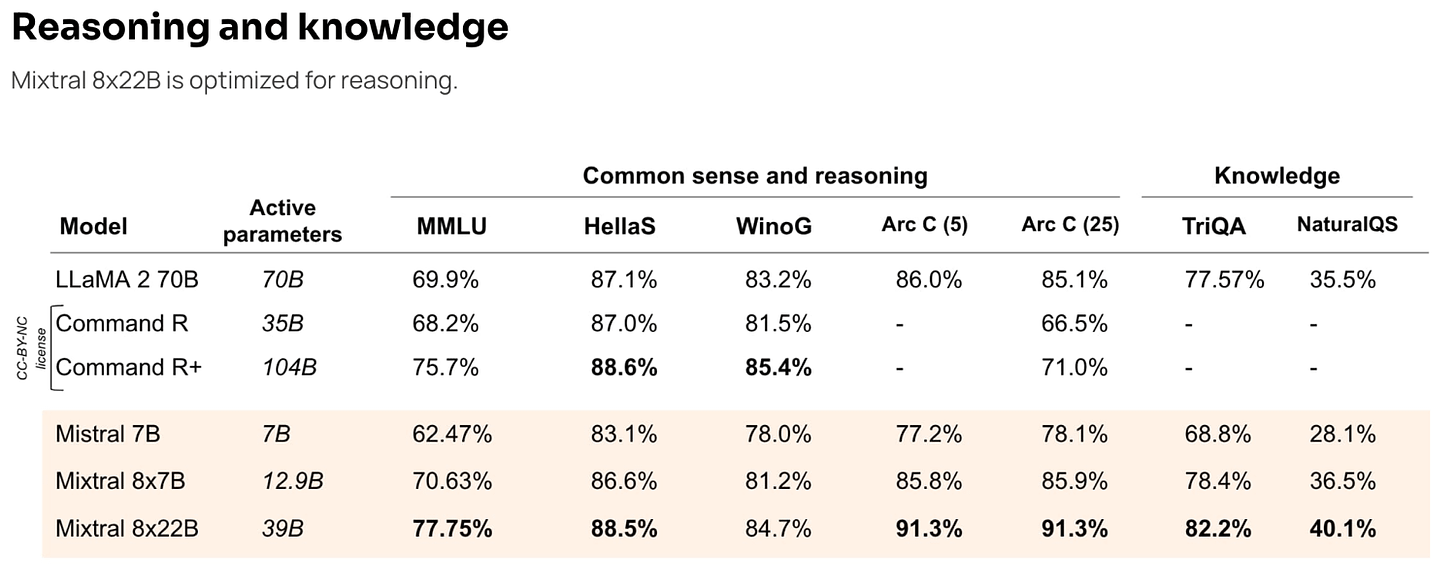

📜 Mixtral 8x22B

https://mistral.ai/news/mixtral-8x22b/

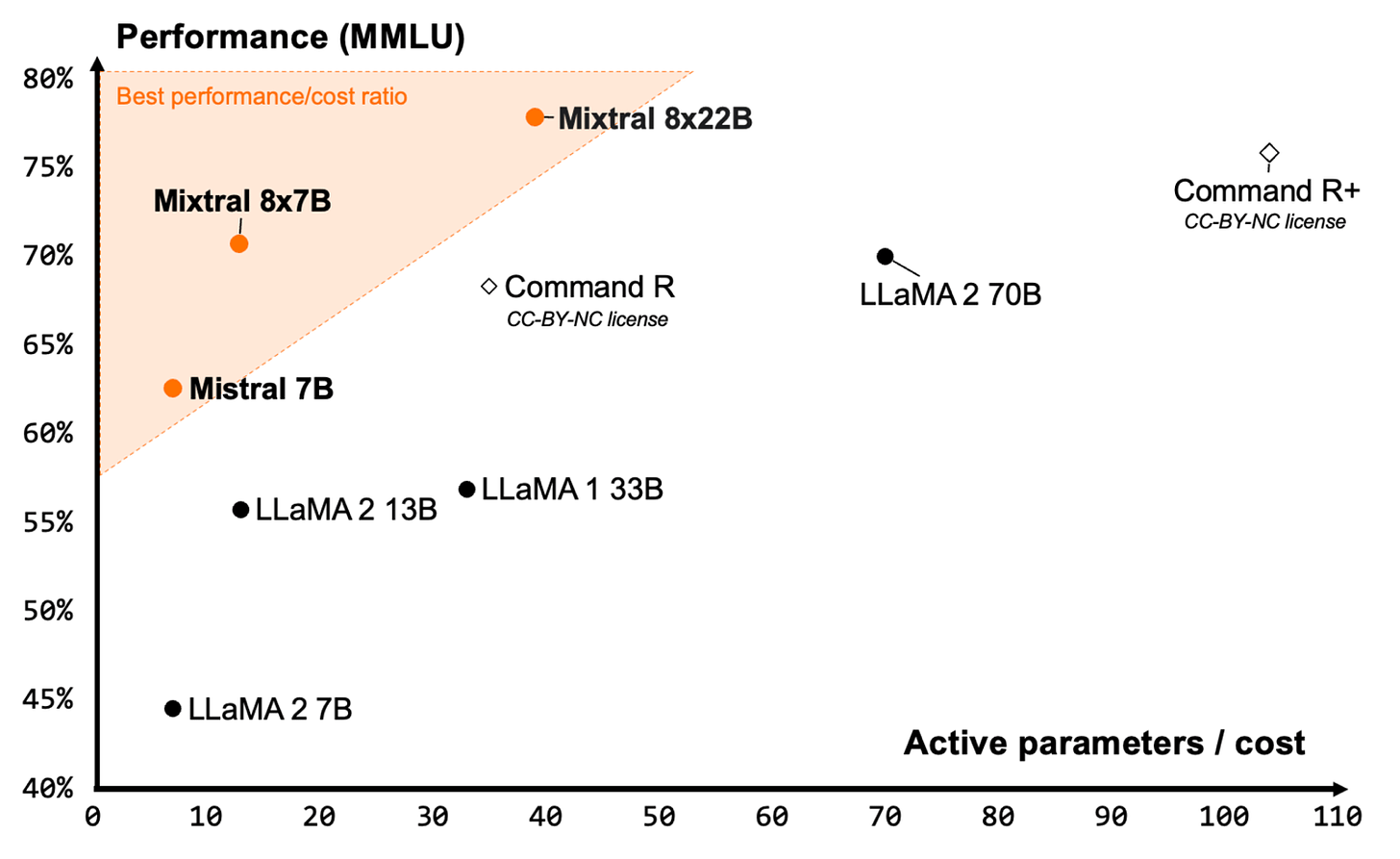

Mixtral 8x22B is the latest open model from French company Mistral.

It sets a new standard for performance and efficiency within the open-source model community and is close to frontier level.

It is a sparse Mixture-of-Experts (SMoE) model that uses only 39B active parameters out of 141B, offering unparalleled cost efficiency for its size.
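For a rough feel of how a sparse MoE layer keeps most parameters idle, here is a toy sketch of top-k expert routing. It assumes 8 experts with 2 chosen per token, as the “8x22B” name and Mistral's earlier Mixtral model suggest. The experts, gate scores and function names are made up for illustration; in a real model the experts are feed-forward networks and the router is learned.

```python
import math
from typing import Callable, Dict, List


def top_k_route(gate_logits: List[float], k: int = 2) -> Dict[int, float]:
    """Pick the k highest-scoring experts and softmax-normalise their weights."""
    ranked = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)
    top = ranked[:k]
    # Subtract the max logit before exponentiating for numerical stability.
    exps = {i: math.exp(gate_logits[i] - gate_logits[top[0]]) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


def moe_layer(x: float, gate_logits: List[float],
              experts: List[Callable[[float], float]], k: int = 2) -> float:
    """Run only the selected experts and blend their outputs by router weight."""
    weights = top_k_route(gate_logits, k)
    return sum(w * experts[i](x) for i, w in weights.items())


# Toy experts (hypothetical): expert n simply multiplies its input by n + 1.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
gate_logits = [0.1, 2.0, -1.0, 0.3, 2.0, 0.0, -0.5, 0.2]  # made-up router scores

if __name__ == "__main__":
    print(top_k_route(gate_logits))             # only 2 of the 8 experts get weight
    print(moe_layer(1.0, gate_logits, experts))
    print(f"Active share: {39 / 141:.1%}")      # 39B of 141B parameters -> 27.7%
```

The active share is a little higher than 2/8 would suggest, most likely because parts of the network, such as the attention layers, are shared by every token rather than split across experts.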

Mixtral 8x22B is released under Apache 2.0, a permissive open-source licence that allows anyone to use, modify and redistribute the model, including commercially, subject to its attribution and notice conditions.

📜 Grok-1.5 Vision Preview

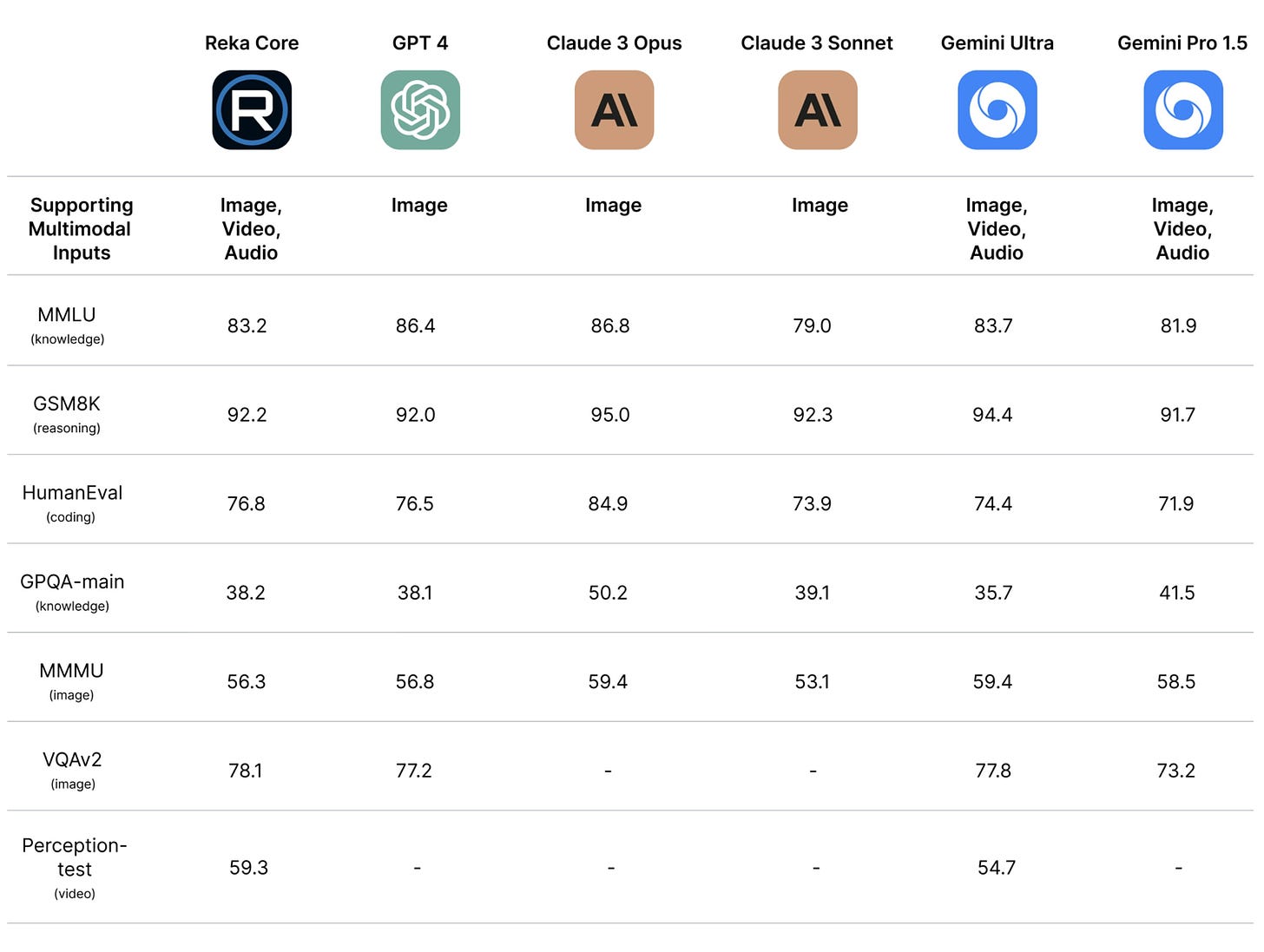

Grok-1.5 Vision Preview is a new “GPT-4 class” multimodal frontier model from Elon Musk’s xAI.

Grok-1.5V is competitive with existing frontier multimodal models in several domains, ranging from multidisciplinary reasoning to understanding documents, science diagrams, charts, screenshots, and photographs.

Grok excels at understanding the physical world (potentially trained on a ton of real-world Tesla camera data?) and allegedly outperforms its peers on RealWorldQA, a new benchmark from xAI that measures real-world spatial understanding. For all datasets below, Grok was evaluated in a zero-shot setting without chain-of-thought prompting.

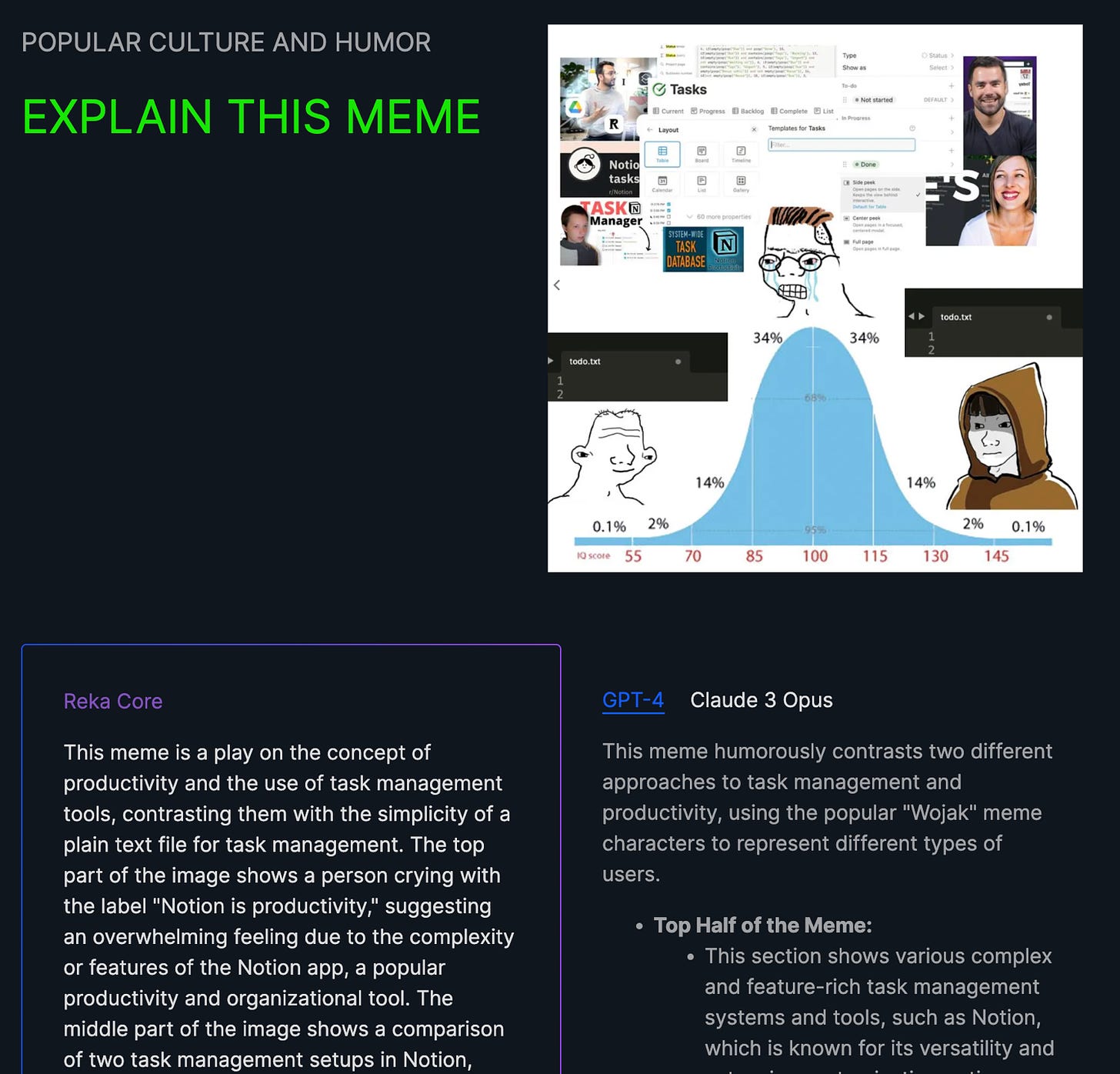

📜 Reka AI Labs - Core, Flash & Edge Models

Reka AI Labs has released a series of multimodal models culminating in a “GPT-4 class” frontier model named “Core”, which is competitive with state-of-the-art models.

The model can be accessed via API or deployed onsite and customised with the assistance of the Reka team.

Check the showcase webpage or the technical report for more detailed information.

Now it’s over to you

That’s all for this week!

Let me know what you found interesting or useful in this week’s digest.

Send me a message or leave a comment below.

Have a great weekend!