Daily Digest 010 - The Countdown to AGI Begins and NVidia Smashes Records With New Blackwell Chip and Exaflop Supercomputer

News, research, hacks, repos and apps from the world of Generative AI.

Hi folks, welcome to edition 010 of the new BotZilla “Daily” Digest format, where I select the most interesting news stories, research papers, GitHub repos, GPTs, tools and apps to help you in your quest to integrate GenAI into your business.

Disclaimer: There are a ton of links in this digest, and while I make every effort to ensure each is harmless, please exercise your cyber due diligence before installing or using any software linked from this post.

Quotable

“This is the most interesting year in human history (2024), except for all future years”

Sam Altman

CEO OpenAI

Latest AI News

The Countdown to AGI Begins

This week, the countdown to AGI sped up as NVidia announced new hardware to support the next generation of AI models.

But, before we get into the weeds of what was announced, I want to ask a question:

Why is there not more public and government discourse about the impact of human expert-level AI (let’s call it AGI) arriving in what appears to be the very near future?

I’m not talking about Terminator-style killer robots set to destroy humanity.

I’m talking about the type of AI that quietly takes over your job, just like the widespread use of the combine harvester reduced the demand for farm labour in the 20th century.

For example, Daniel Kokotajlo from OpenAI recently shared his private thoughts on AGI.

In point 1, Daniel says “Probably there will be AGI soon—literally any year now”. AGI is human expert-level AI.

And in point 2, he says, “ASI” will appear “shortly thereafter—maybe in another year, give or take”

ASI is Artificial Super Intelligence—that is, AI which is thought to have greater abilities than all of humanity put together.

And it might arrive shortly after AGI, which in turn could arrive any year now!

Seriously, W-T-F!

This is not some random YouTuber. Nor is he the only expert saying this.

Daniel has a PhD (admittedly in Philosophy!), works at OpenAI, and probably gets to play with AI tech in its research labs long before we see it in public.

For example, GPT-4 was held back for over seven months for “alignment” training before being released to the public.

One definition of AGI is that it can do 99% of currently fully remote jobs. Let’s be honest, if you’re reading this on your work computer, that’s probably you.

And this is what’s generally referred to as “weak” AGI.

Full AGI will also be embodied, that is, housed in a robotic body that can undertake most physical human tasks.

For me, robotics is a societal challenge to manage in the 2030s, but even so, 99% of fully remote (digital) jobs being automatable by AI in the next few years will have quite a big impact (and yes, I’m understating) on the economy and society.

But turn on your TV, and virtually no one in the mainstream media is talking about this.

For comparison, imagine if experts predicted an asteroid would hit Earth within 2 years with a 50% probability, but everyone just ignored it. This is the AGI situation today as I see it.

To account for this, I envision 2 scenarios. Either:

AGI is a mirage, and we’re still far off from it. AI CEOs know it, which is why they are not shouting loudly about it, but they want to keep up the hype for investment and valuation purposes.

Or:

AI IS HAVING A “DON’T-LOOK-UP” MOMENT!

Of the 2 options, I think the latter is the more probable.

New NVidia hardware for next-gen AI models

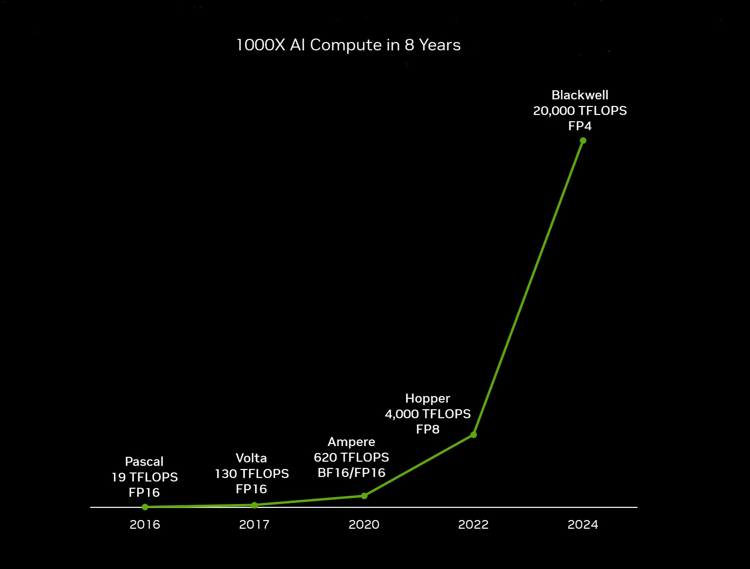

NVidia announced its new Blackwell GPU and DGX platform, claiming to have increased compute power 1000 times over the past 8 years.

If you look at the above graph from NVidia, you can see that the computing power delivered by their leading GPUs has grown exponentially, and there’s no sign that it’s going to stop anytime soon.
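To get a feel for what that claim implies, here is a quick back-of-the-envelope sketch. It assumes the 1000x gain was spread evenly across the 8 years, which is my simplification and not something NVidia states, and the function name is purely illustrative.

```python
import math


def annual_growth_factor(total_factor: float, years: float) -> float:
    """Constant yearly multiplier that compounds to total_factor over years."""
    return total_factor ** (1.0 / years)


# Figures taken from NVidia's claim: 1000x in 8 years.
yearly = annual_growth_factor(1000, 8)

# Time needed to double at that constant rate, expressed in months.
doubling_months = 12 * math.log(2) / math.log(yearly)

print(f"Implied growth: about {yearly:.2f}x per year")
print(f"Implied doubling time: about {doubling_months:.1f} months")
```

Under that even-growth assumption, compute more than doubles every year, which is well ahead of the doubling every two years or so usually associated with Moore's Law.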

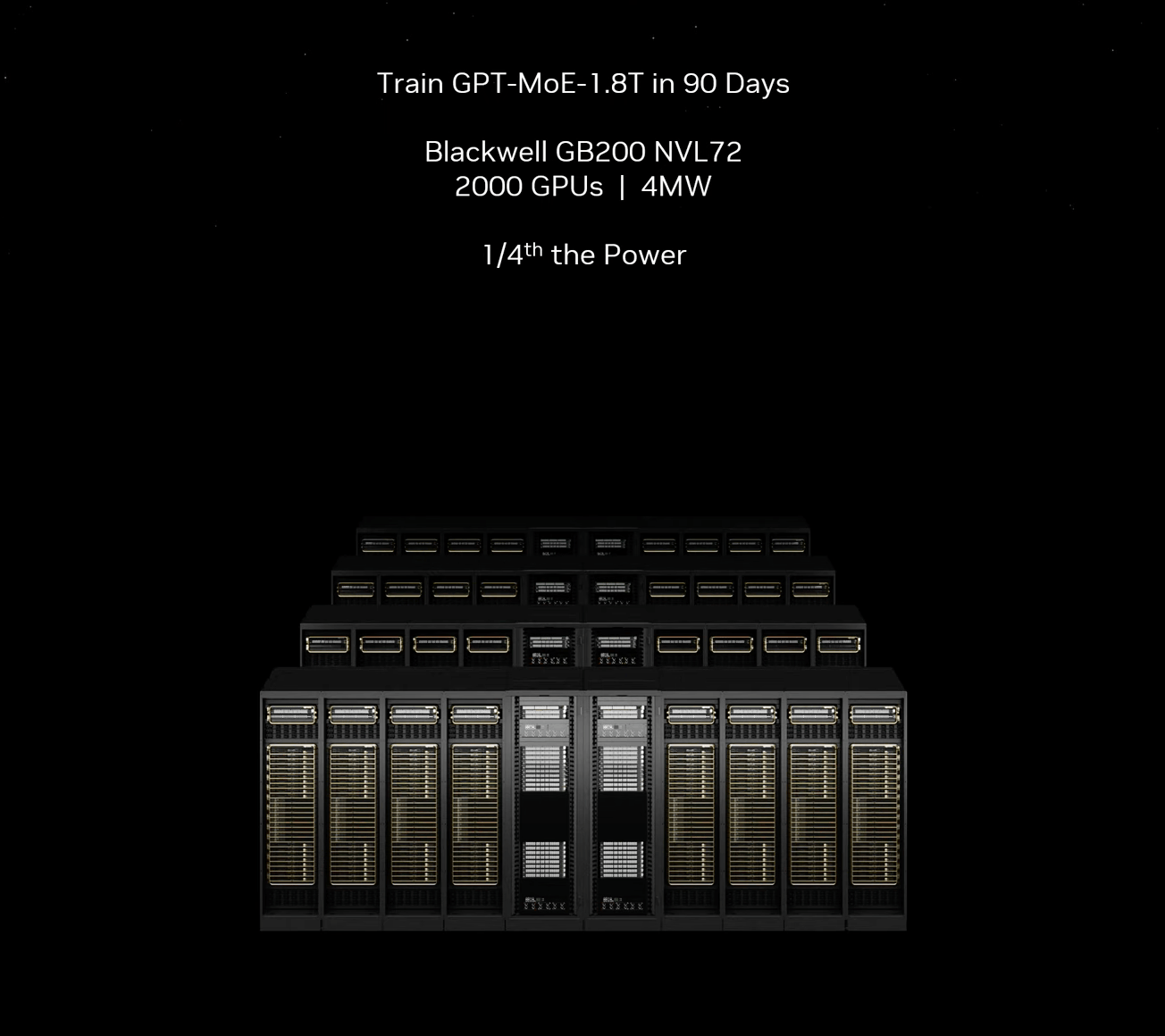

Furthermore, NVidia is releasing its new DGX GB200 supercomputer “in a rack”, which is built from multiple Blackwell GPUs working together. It claims a single DGX supercomputer can deliver 1 exaflop of compute.

To put that into perspective, one exaflop is a staggering 10^18, or 1,000,000,000,000,000,000, floating-point operations per second.
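For a sense of scale, the sketch below works out how long a machine running at 1 teraflop (10^12 operations per second) would need to match a single second of exaflop compute. The 1-teraflop comparison machine is purely my illustrative assumption, not a figure from NVidia.

```python
EXAFLOP = 10**18   # floating-point operations per second
TERAFLOP = 10**12  # hypothetical comparison machine (an assumption)
SECONDS_PER_DAY = 24 * 60 * 60

# Work done by the exaflop machine in one second, divided by the
# slower machine's rate, gives the slower machine's running time.
seconds_needed = EXAFLOP // TERAFLOP
days_needed = seconds_needed / SECONDS_PER_DAY

print(f"Seconds needed at 1 teraflop: {seconds_needed:,}")
print(f"That is about {days_needed:.1f} days")
```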

They also claim that energy consumption has been quartered compared to the previous generation of GPU (Hopper).

Undoubtedly, the Blackwell GPU and DGX supercomputer will be the infrastructure of choice on which the next generation of AI foundation models will be pretrained and served for inference.

Today’s models run on hardware from the 2020-22 era, so the increase in power and reduction in energy costs will be substantial, enabling multi-trillion-parameter models to proliferate towards the end of this year.

If you want to see what the DGX hardware looks like, click this link. It’s a beast.

If you don’t have the time to watch the whole 2-hour-long NVidia Keynote on YouTube, delivered by CEO Jensen Huang, try using this GPT to summarise and Q&A on the video.

📰 In other news

So much other big stuff happened this week!

Microsoft hires Inflection AI founders

Microsoft hired 2 of the 3 Inflection AI co-founders after investing $1.3 billion in the company less than a year ago. Inflection says it will now pivot to become an “AI studio” where it creates custom generative AI models for commercial customers.

It looks like the wind has been taken out of the sails of the former foundation-model company, and there’s little chance the original investments will be recouped.

However, $1.3bn is a drop in the ocean for Microsoft, which acquired two new executives and several of their colleagues to head up the newly formed Microsoft AI unit.

Even though Microsoft also all but controls AI leader OpenAI with its 11-figure investment and infrastructure deal, it must feel exposed in a way that Google and Anthropic don’t, given that both have their own world-class, homegrown AI teams.

Therefore, it’s understandable that they have spread their AI investments and acquisitions wide to bolster their position and counteract any further executive “turbulence” at OpenAI, as happened last November.

Apple in talks with Google about AI licensing deal

Which leads us to Apple.

For me, Apple has been asleep at the wheel of innovation for what seems like a decade or more now.

Although I still prefer their macOS to Windows, and iPhone to any other mobile device, it feels like they’ve been stuck milking the iPhone franchise with incremental improvements, while extracting unreasonably high fees from developers who use their App Store to bolster their so-called “services” division.

Due to their myopic iPhone/App Store vision, they completely missed the AI revolution of the last three years and have subsequently been left in the dust by competitors.

Playing catch-up, they have taken to negotiating with their rivals, notably Google, to license the Gemini AI engine for use on the iPhone and other devices.

The question of who pays whom for using Google’s AI models remains a secret. In the past, Google has paid Apple billions per year just to be the default search engine in the Safari web browser on the iPhone and other devices.

There are also rumours that Apple sought discussions with OpenAI about licensing their GPT models. Given the decades-old rivalry between Microsoft (who backs OpenAI) and Apple, it seems unlikely to me that they would ever willingly give their old foe a helping hand. I imagine the talks were more likely a negotiating tool to weaken Google’s hand.

Time will tell.

And finally …

If you watch one YouTube video or listen to one podcast on the topic of AI this week, I highly recommend Lex Fridman’s interview with Sam Altman, link below.

In it, Sam (somewhat vaguely) discusses GPT-5, Q-Star and other topics in more depth, all of which are likely to have a huge impact on AI progress this year.

As I mentioned in the introduction, it feels like weak AGI systems could emerge any time now.

I think it’s odds-on that AGI capable of competing with humans on any digital task will be available well before 2030.

But will we (the general public) have access to it?

Possibly not.

Why?

Because a powerful AGI that can learn and improve on the fly might well be used to create an ASI (Artificial Super Intelligence). And, as we discussed before, once an ASI evolves, no one knows if it can be controlled, so it's high risk.

But besides the potential risks of intentionally or unintentionally creating an ASI, what company is going to allow public access to its AGI when a competitor could also use it to create its own AGI/ASI?

It’s just not going to happen.

At least, not without powerful guardrails installed and a hefty price tag.

So, for the rest of this year, I think we, the general public, at least, will have to be content with incremental improvements to GPT-4 and other models, which will still be amazing, but not quite the AGI we’ve all been expecting.

Hacks

Welcome to the LLM hacks section, where I track the latest exploits, research and tools to help you build safer GenApps.

☠️ Defense in Depth: An Action Plan to Increase the Safety and Security of Advanced AI

Links: https://www.gladstone.ai/

This article addresses significant concerns about the rapid advancement of artificial intelligence (AI), emphasising that advanced AI could pose catastrophic risks that are weapons of mass destruction-like (WMD-like) and WMD-enabling.

The core issue is identified as the intense competition among top AI labs, all striving to achieve human-level and superhuman artificial general intelligence (AGI) within the current decade. Such ambitions, while pioneering, pose substantial threats to democratic governance and global security due to the transformative implications of AGI.

The risks discussed are of a global nature, characterised by their technical complexity and the swift pace of development, leading to a diminishing window for policymakers to establish technically informed safeguards. These measures are deemed essential for the responsible development and adoption of advanced AI technologies, aiming to address the quickly emerging national security concerns.

Although frontier lab executives and staff recognise these risks, competitive pressures are seen to push labs to prioritise enhancing AI capabilities over maintaining safety and security measures.

This dynamic increases the likelihood of the world's most advanced AI systems being appropriated and potentially weaponised against national interests, especially those of the U.S. The article calls attention to the critical need for a balanced approach that safeguards both the pioneering spirit of AI innovation and the security imperatives, underlining the pivotal role of policy intervention in achieving this equilibrium.

Research

Welcome to the LLM Research section, where I track the latest AI research.

🌮 Quiet-STaR: Language Models Can Teach Themselves to Think Before Speaking

Links: https://arxiv.org/pdf/2403.09629.pdf

(As an aside: Is this the legendary Q-Star?)

When writing and talking, people sometimes pause to think.

This paper explores how language models (LMs) can infer unstated rationales in arbitrary text, improving their predictions by generating internal thoughts or rationales. This approach, named Quiet-STaR, is an extension of the Self-Taught Reasoner (STaR) that focuses on generalizing reasoning across a broader range of texts.

The paper addresses the computational challenges involved in implementing Quiet-STaR, proposing solutions such as a tokenwise parallel sampling algorithm and an extended teacher-forcing technique. By generating and evaluating rationales at each token, the model learns to improve predictions for difficult-to-predict tokens and directly answer complex questions without requiring fine-tuning on specific tasks. The improvements are quantitatively demonstrated through zero-shot performance boosts on reasoning tasks like GSM8K and CommonsenseQA.

Key contributions of the work include:

Generalizing STaR for learning reasoning from diverse text data, marking a novel approach to training LMs on general reasoning rather than curated tasks.

A scalable parallel sampling algorithm that generates rationales from all token positions in a given string.

Introduction of custom meta-tokens to signal the start and end of a thought process, aiding the LM in generating more useful rationales.

A mixing head technique that integrates the next-token prediction from a given thought into the current next-token prediction, improving the model's prediction capabilities.
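The mixing head idea in the last point can be sketched in a few lines. This is a toy illustration, not the paper's code: in Quiet-STaR the mixing weight is produced by a learned network, whereas here it is a plain number passed in by hand, and the function names are my own.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Convert logits to probabilities (shifted by the max for stability)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def mix_logits(base: List[float], thought: List[float], weight: float) -> List[float]:
    """Interpolate between next-token logits computed without a thought
    (base) and with a thought (thought). weight=0 ignores the thought,
    weight=1 trusts it fully."""
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must be between 0 and 1")
    if len(base) != len(thought):
        raise ValueError("logit vectors must have the same length")
    return [(1.0 - weight) * b + weight * t for b, t in zip(base, thought)]


# Made-up logits over a three-token vocabulary.
base_logits = [2.0, 1.0, 0.0]
thought_logits = [0.0, 1.0, 3.0]

mixed = mix_logits(base_logits, thought_logits, weight=0.5)
print("Mixed logits:", mixed)
print("Mixed probabilities:", [round(p, 3) for p in softmax(mixed)])
```

The appeal of this design is that a low weight keeps the model close to its original predictions, so thoughts that are not yet useful do little damage early in training.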

The research opens up new avenues for enhancing language models' reasoning abilities by leveraging the intrinsic reasoning tasks present in language itself, pointing towards more robust and adaptable LMs.

🌮 AutoDev: Automated AI-Driven Development

Links: https://arxiv.org/pdf/2403.08299.pdf

This paper, by Michele Tufano and colleagues from Microsoft, describes a framework for enhancing software development through automation and AI-driven processes. Here's a summary of the key points:

AutoDev Framework: AutoDev is designed to automate complex software engineering tasks by using autonomous AI Agents. These agents can perform a wide range of operations on a codebase, including editing, building, testing, and executing code, as well as handling version control operations. The framework utilizes Docker containers to ensure a secure development environment and allows for fine-grained control over the permitted actions of the AI agents.

Features and Capabilities: The framework offers several innovative features:

Conversation Manager handles communications between users and AI agents, ensuring smooth progress toward objectives.

Tools Library provides a suite of actions (e.g., file editing, code retrieval, building, and testing) that agents can perform autonomously.

Agent Scheduler coordinates multiple AI agents to work collaboratively on tasks.

Evaluation Environment executes the suggested operations in a secure manner and provides feedback to the AI agents.
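To make the idea of fine-grained control over permitted actions a little more concrete, here is a minimal sketch of a tools registry with an allow-list. Everything in it (the class, the method names, the tool names) is hypothetical and written for illustration; it is not AutoDev's actual API, and it runs nothing inside Docker.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Set


@dataclass
class ToolsLibrary:
    """Hypothetical registry of agent actions guarded by an allow-list."""
    allowed: Set[str]
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)

    def register(self, name: str, fn: Callable[[str], str]) -> None:
        """Make a tool available, whether or not it is currently permitted."""
        self.tools[name] = fn

    def run(self, name: str, arg: str) -> str:
        """Run a tool only if it exists and the user has permitted it."""
        if name not in self.tools:
            raise KeyError(f"unknown tool: {name}")
        if name not in self.allowed:
            raise PermissionError(f"tool not permitted: {name}")
        return self.tools[name](arg)


# The user permits reading files but not pushing to version control.
library = ToolsLibrary(allowed={"read_file"})
library.register("read_file", lambda path: f"(contents of {path})")
library.register("git_push", lambda branch: f"pushed {branch}")

print(library.run("read_file", "main.py"))
try:
    library.run("git_push", "main")
except PermissionError as err:
    print(f"Blocked: {err}")
```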

Performance Evaluation: AutoDev was tested on the HumanEval dataset, achieving promising results in code and test generation tasks. For code generation, it achieved a Pass@1 rate of 91.5%, and for test generation, it achieved a Pass@1 rate of 87.8%, demonstrating its effectiveness in automating software engineering tasks while maintaining a secure and user-controlled development environment.
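Pass@1 is the share of problems solved by the first generated attempt. The more general pass@k metric is usually computed with the unbiased estimator introduced alongside HumanEval, and the sketch below implements that estimator. The sample counts in the usage lines are made up for illustration and are not AutoDev's results.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate the probability that at least one of k samples passes,
    given that c out of n generated samples passed the tests."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k failing samples exist, so any k samples include a pass.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


# Illustrative numbers: 10 samples for one problem, 3 of which pass.
print(f"pass@1 = {pass_at_k(n=10, c=3, k=1):.3f}")
print(f"pass@5 = {pass_at_k(n=10, c=3, k=5):.3f}")
```

For k = 1 the estimator reduces to c / n, the plain fraction of passing samples, and the benchmark score is the average of this value across all problems.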

Discussion and Future Work: The paper discusses AutoDev's potential for multi-agent collaboration and human-in-the-loop scenarios. Future plans include deeper integration into IDEs, creating a chatbot experience, and incorporating AutoDev into CI/CD pipelines and PR review platforms.

The paper underscores the transformative impact of AI in automating and streamlining software development processes, showcasing the potential of AI-driven frameworks like AutoDev to significantly enhance productivity and efficiency in software engineering tasks.

Now it’s over to you

That’s all for this week!

Let me know what you found interesting or useful in this week’s digest.

Send me a message or leave a comment below.

Have a great weekend!